43 Top Free Data Mining Software

Data mining is the computational process of discovering patterns in large data sets, using methods from artificial intelligence, machine learning, statistical analysis, and database systems. Its goal is to extract information from a data set and transform it into an understandable structure for further use.

In today's business market, the level of engagement between customers and companies, services, or even products has changed. Companies have made their online presence prominent by becoming easily accessible through social platforms such as Facebook, Twitter, and WhatsApp. These platforms provide valuable data, but that data is unstructured, which is one reason most companies require data mining tools.

Data mining software allows businesses to collect information from different platforms and use the data for purposes such as market evaluation and analysis. Data mining helps users keep track of important data and use it to improve the business. In addition, the software has become important for making informed decisions in a business setting.

Data mining software helps explore unknown patterns that are significant to the success of the business. The actual data mining task is the automatic analysis of large quantities of data to extract previously unknown, interesting patterns such as groups of data records (cluster analysis), unusual records (anomaly detection), and dependencies (association rule mining, sequential pattern mining).
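To make the idea of dependencies concrete, here is a minimal sketch of association rule mining restricted to item pairs. The transactions, the `pair_rules` helper, and the 0.5 support threshold are all illustrative assumptions, not taken from any tool listed here; real tools use more general algorithms such as Apriori or FP-growth.

```python
from collections import Counter
from itertools import combinations

# Hypothetical market-basket transactions (illustrative data only).
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"bread", "milk", "butter"},
    {"milk"},
]


def pair_rules(transactions, min_support=0.5):
    """Return (antecedent, consequent, support, confidence) for frequent item pairs."""
    n = len(transactions)
    item_counts = Counter(item for t in transactions for item in t)
    pair_counts = Counter(
        pair for t in transactions for pair in combinations(sorted(t), 2)
    )
    rules = []
    for (a, b), count in pair_counts.items():
        support = count / n  # share of transactions containing both items
        if support < min_support:
            continue
        # Each frequent pair yields two candidate rules: a -> b and b -> a.
        # Confidence is the share of antecedent transactions that also hold the consequent.
        rules.append((a, b, support, count / item_counts[a]))
        rules.append((b, a, support, count / item_counts[b]))
    return sorted(rules)


rules = pair_rules(transactions)
for antecedent, consequent, support, confidence in rules:
    print(f"{antecedent} -> {consequent}: support={support:.2f}, confidence={confidence:.2f}")
```

In this toy data, every basket that contains butter also contains bread, so the rule "butter -> bread" has the highest confidence.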

Top Free Data Mining Software: Orange Data mining, Anaconda, R Software Environment, Scikit-learn, Weka Data Mining, Shogun, DataMelt, Natural Language Toolkit, Apache Mahout, GNU Octave, GraphLab Create, ELKI, Apache UIMA, KNIME Analytics Platform Community, TANAGRA, Rattle GUI, CMSR Data Miner, OpenNN, Dataiku DSS Community, DataPreparator, LIBLINEAR, Chemicalize.org, Vowpal Wabbit, mlpy, Dlib, CLUTO, TraMineR, ROSETTA, Pandas, Fityk, KEEL, ADaMSoft, Sentic API, ML-Flex, Databionic ESOM, MALLET, streamDM, ADaM, MiningMart, Modular toolkit for Data Processing, Jubatus, LIBSVM, and Arcadia Data Instant.

What is Data Mining Software?

Data mining is the process of identifying patterns, analyzing data, and transforming unstructured data into structured and valuable information that can be used to make informed business decisions. Data mining software allows an organization to analyze data from a wide range of databases and detect patterns.

The main aim of data mining tools is to find, extract, and refine data, then distribute the information and monetize it. Data mining is important because it extracts insights from data, whether structured or unstructured. Structured data refers to data that has been organized into columns and rows for efficient modification.

Most organisations that handle large amounts of data use data mining approaches based on machine learning algorithms. Data mining is a method used to extract hidden information from large databases. It identifies and reports hidden correlations, patterns, and trends.

Data mining cannot be identified as purely statistical; it is an interdisciplinary science that combines computer science and mathematical algorithms executed by a machine.

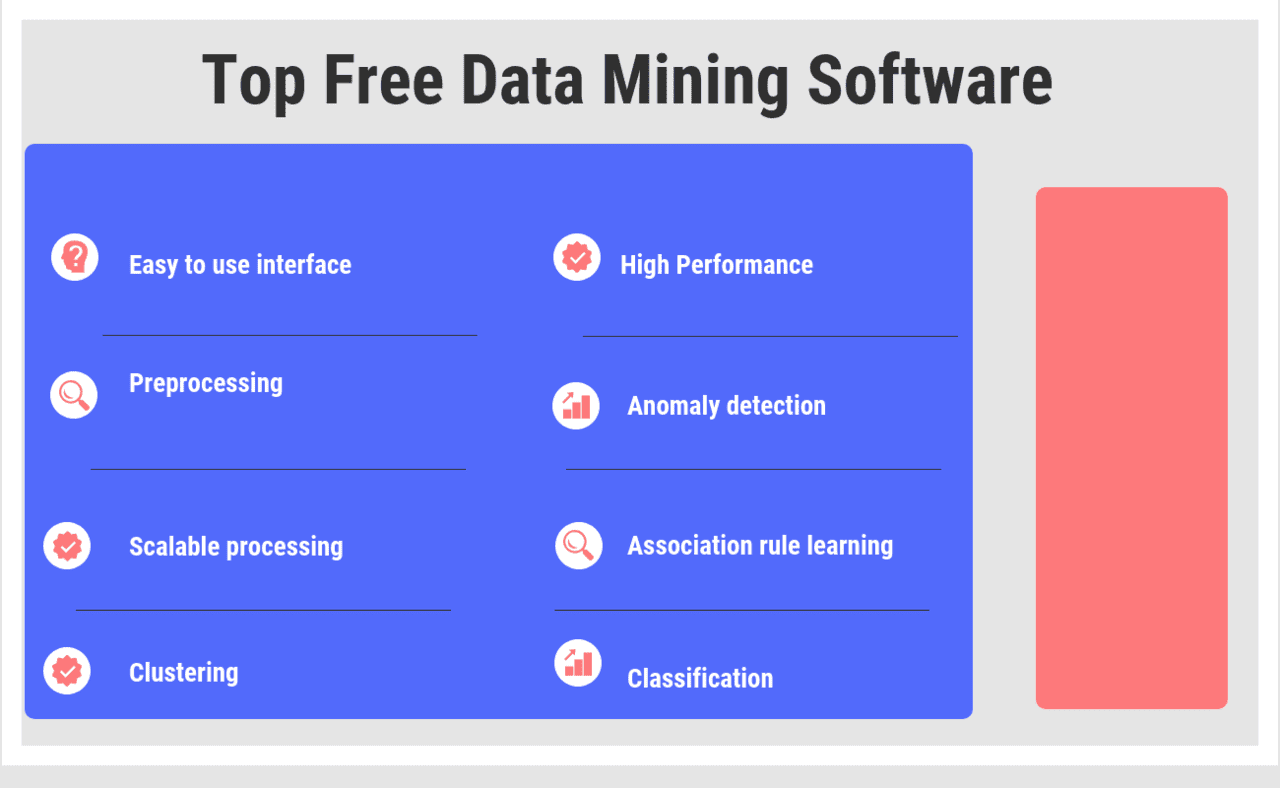

- Easy to use interface: Data mining software has an easy-to-use GUI that allows quick analysis of data.

- Preprocessing: Data preprocessing is an important step in data mining because it transforms raw data into an understandable format. It involves data cleaning, where missing values and inconsistencies are resolved. Data integration and transformation are also steps in data preprocessing.

- Scalable processing: Data mining software allows scalable processing, from a single-user system to processing for a large organization. In other words, the software scales with the number of users and the size of the data to be processed.

- High Performance: Data mining software boosts performance through high-performance data mining nodes, especially in companies that deal with large amounts of data. The mining tools provide an environment that leads to faster generation of business results.

- Anomaly detection: The identification of unusual data records that might be interesting, or data errors that require further investigation.

- Association rule learning: Searches for relationships between variables.

- Clustering: The task of discovering groups and structures in the data that are in some way or another "similar", without using known structures in the data.

- Classification: The task of generalizing known structure to apply to new data.

- Regression: Attempts to find a function that models the data with the least error, that is, for estimating the relationships among data or datasets.

- Data Summarization: Data mining tools should be able to compress data into an informative representation. Methods such as tabulation are commonly used to summarize large datasets. The software provides interactive data preparation tools.
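Two of the tasks above, anomaly detection and regression, can be sketched with nothing but the Python standard library. The function names, the z-score threshold of 2.0, and the sample values below are assumptions made for illustration; they do not come from any of the tools in this list, which offer far more robust implementations.

```python
from statistics import mean, pstdev


def zscore_outliers(values, threshold=2.0):
    """Anomaly detection: flag values more than `threshold` standard deviations from the mean."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [v for v in values if abs(v - mu) / sigma > threshold]


def fit_line(xs, ys):
    """Regression: ordinary least-squares fit of y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Made-up sensor readings with one unusual record.
readings = [10, 11, 9, 10, 12, 10, 11, 50]
outliers = zscore_outliers(readings)

# Made-up points that lie exactly on y = 2x + 1.
slope, intercept = fit_line([1, 2, 3, 4], [3, 5, 7, 9])

print("outliers:", outliers)
print("slope:", slope, "intercept:", intercept)
```

The z-score rule is only one simple way to flag unusual records, and it is sensitive to the very outliers it is looking for; it is shown here because it fits in a few lines.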

Top Free Data Mining Software

Orange Data mining

Orange is an open source data visualization and analysis tool. Orange is developed at the Bioinformatics Laboratory at the Faculty of Computer and Information Science, University of Ljubljana, Slovenia, along with the open source community. Data mining is done through visual programming or Python scripting. The tool has components for machine learning and add-ons for bioinformatics and text mining, and it is packed with features for data analytics. Orange is a Python library. Python scripts can run in a terminal window, integrated environments like PyCharm and PythonWin, or shells like IPython. Orange consists of a canvas interface onto which the user places…

• Open Source

• Interactive Data Visualization

• Visual Programming

• Supports Hands-on Training and Visual Illustrations

• Add-ons Extend Functionality

Free

• For everyone: beginners and professionals

• Execute simple and complex data analysis

• Create beautiful and interesting graphics

Anaconda

Anaconda is an open data science platform powered by Python. The open source version of Anaconda is a high performance distribution of Python and R and includes over 100 of the most popular Python, R and Scala packages for data science. There is also access to over 720 packages that can easily be installed with conda, the package, dependency and environment manager, that is included in Anaconda. Includes the most popular Python, R & Scala packages for stats, data mining, machine learning, deep learning, simulation & optimization, geospatial, text & NLP, graph & network, image analysis. Featured packages include: NumPy,…

• Analytics Workflows

• Analytics Interaction

• High Performance Distribution

• Data Engineering

• Advanced Analytics

• High Performance Scale Up

• Reproducibility

• Analytics Deployment

Contact for Pricing

• Accelerate streamline of data science workflow from ingest through deployment

• Connect all data sources to extract the most value from data

• Create, collaborate and share with the entire team

R Software Environment

R is a free software environment for statistical computing and graphics. It compiles and runs on a wide variety of UNIX platforms, Windows and MacOS. R is an integrated suite of software facilities for data manipulation, calculation and graphical display. Some of the functionalities include an effective data handling and storage facility, a suite of operators for calculations on arrays, in particular matrices, a large, coherent, integrated collection of intermediate tools for data analysis, graphical facilities for data analysis and display either directly at the computer or on hardcopy, and well developed, simple and effective programming language which includes conditionals,…

• Open Source - Free Software

• Provides a wide variety of Statistical (linear and nonlinear modeling, classical statistical tests, time-series analysis, classification, clustering) and Graphical Techniques

• Effective data handling and storage facility

• Suite of operators for calculations on arrays, in particular matrices

• Large, coherent, integrated collection of intermediate tools for data analysis

• Graphical facilities for data analysis and display either on-screen or on hardcopy

• Well-developed, simple and effective programming language which includes conditionals, loops, user-defined recursive functions and input and output facilities

Free

• Brings analytics to your data

• Runs on a wide variety of platforms- UNIX, Windows, MacOS

• Widely used statistical software

Scikit-learn

Scikit-learn is an open source machine learning library for the Python programming language. It features various classification, regression and clustering algorithms including support vector machines, random forests, gradient boosting, k-means and DBSCAN, and is designed to interoperate with the Python numerical and scientific libraries NumPy and SciPy. Classification: Identifying which category an object belongs to. Applications: Spam detection, Image recognition. Algorithms: SVM, nearest neighbors, random forest. Regression: Predicting a continuous-valued attribute associated with an object. Applications: Drug response, Stock prices. Algorithms: SVR, ridge regression. Clustering: Automatic grouping of similar objects into sets. Applications: Customer segmentation, Grouping experiment outcomes.…
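As a rough illustration of the classification and clustering tasks described above, the following sketch uses scikit-learn's `KNeighborsClassifier` and `KMeans` on a tiny made-up dataset. The data points and parameter values are assumptions chosen for readability, not recommendations.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.neighbors import KNeighborsClassifier

# Two well-separated groups of 2-D points (made-up data).
X = np.array([[0.0, 0.0], [0.1, 0.2], [0.2, 0.1],
              [5.0, 5.0], [5.1, 5.2], [5.2, 5.1]])
y = np.array([0, 0, 0, 1, 1, 1])

# Classification: learn from labeled points, then label new ones.
clf = KNeighborsClassifier(n_neighbors=3).fit(X, y)
predicted = clf.predict([[0.15, 0.15], [4.9, 5.0]])

# Clustering: group the same points without using the labels.
kmeans = KMeans(n_clusters=2, n_init=10, random_state=0).fit(X)
cluster_labels = kmeans.labels_

print("predicted classes:", predicted)
print("cluster labels:", cluster_labels)
```

The cluster numbers that k-means assigns are arbitrary, so only the grouping is meaningful, not which group is called 0 or 1.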

Weka Data Mining

Weka is a collection of machine learning algorithms for data mining tasks. The algorithms can either be applied directly to a dataset or called from your own Java code. Weka features include machine learning, data mining, preprocessing, classification, regression, clustering, association rules, attribute selection, experiments, workflow and visualization. Weka is written in Java and was developed at the University of Waikato, New Zealand. All of Weka's techniques are predicated on the assumption that the data is available as a single flat file or relation, where each data point is described by a fixed number of attributes. Weka provides access to SQL databases…

• Data Pre-Processing

• Data Classification

• Data Regression

• Data Clustering

• Data Association rules

• Data Visualization

Free

• Portable

• Free to use

• Easy to use

Shogun

Shogun is a free, open source toolbox written in C++. It offers numerous algorithms and data structures for machine learning problems. The focus of Shogun is on kernel machines such as support vector machines for regression and classification problems. Shogun also offers a full implementation of Hidden Markov models. The toolbox makes it easy to combine multiple data representations, algorithm classes, and general purpose tools. This enables both rapid prototyping of data pipelines and extensibility in terms of new algorithms. It now offers features that span the whole space of Machine Learning methods, including many classical methods in classification, regression,…

• Free software, community-based development and machine learning education

• Supports many languages, including C++, Python, Octave, R, Java, Lua, C#, and Ruby

• Runs natively under Linux/Unix, macOS, and Windows

• Provides efficient implementations of all standard ML algorithms

• LibSVM/LibLinear, SVMLight, LibOCAS, libqp, VowpalWabbit, Tapkee, SLEP, GPML and more

Free

• Completely free to use

• Runs on many operating systems

• Works on different platforms

DataMelt

DataMelt, or DMelt, is software for numeric computation, statistics, analysis of large data volumes ("big data") and scientific visualization. The program can be used in many areas, such as natural sciences, engineering, modeling and analysis of financial markets. DMelt is a computational platform. It can be used with different programming languages on different operating systems. Unlike other statistical programs, it is not limited to a single programming language. DMelt can be used with several scripting languages, such as Python/Jython, BeanShell, Groovy, Ruby, as well as with Java. It is comprehensive software that includes more than 30,000 Java classes for computation…

•DMelt with all jar libraries and IDE. Mixed GPL and non-GPL licences (180 MB size)

•Online manual (basic introduction)

•Access to Java API of DMelt core library (600 classes)

•Community forum and bug tracker

•Updates of separate jar files via DMelt IDE

•Full version of DMelt manual

•Access to Java API (30,000 classes) with full search

•Access to Image gallery with code examples

•Web access to more than 500 DMelt examples with searchable database

Many features are free. For all the features, the user must pay for membership.

Natural Language Toolkit

NLTK is a leading platform for building Python programs to work with human language data. It provides easy-to-use interfaces to over 50 corpora and lexical resources such as WordNet, along with a suite of text processing libraries for classification, tokenization, stemming, tagging, parsing, and semantic reasoning, wrappers for industrial-strength NLP libraries, and an active discussion forum. Thanks to a hands-on guide introducing programming fundamentals alongside topics in computational linguistics, plus comprehensive API documentation, NLTK is suitable for linguists, engineers, students, educators, researchers, and industry users alike. NLTK is available for Windows, Mac OS X, and Linux. Best of all, NLTK…

•Feature structure types

•Parsing feature structure strings

•Feature paths

•Reentrance

•Text classification

Free

• Tokenization

• Stemming

• Tagging
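Tokenization and stemming can be tried in a few lines. This is a minimal sketch that assumes NLTK is installed; it deliberately uses `wordpunct_tokenize` and `PorterStemmer`, which work without downloading extra corpora, and the sample sentence is made up. Part-of-speech tagging with `nltk.pos_tag` is left out because it needs tagger data that must be downloaded separately.

```python
from nltk.stem import PorterStemmer
from nltk.tokenize import wordpunct_tokenize

# Made-up sample sentence.
text = "Data mining tools are discovering hidden patterns."

# Tokenization: split the text into word and punctuation tokens.
tokens = wordpunct_tokenize(text)

# Stemming: reduce each token to a crude root form.
stemmer = PorterStemmer()
stems = [stemmer.stem(token) for token in tokens]

print(tokens)
print(stems)
```

Stems are not always dictionary words, since the Porter algorithm strips suffixes by rule rather than by looking words up.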

Apache Mahout

The Apache Mahout project’s goal is to build an environment for quickly creating scalable, performant machine learning applications. Apache Mahout is a simple and extensible programming environment and framework for building scalable algorithms and contains a wide variety of premade algorithms for Scala and Apache Spark, H2O, and Apache Flink. It also uses Samsara, a vector math experimentation environment with R-like syntax that works at scale. Apache™ Mahout is a library of scalable machine-learning algorithms, implemented on top of Apache Hadoop and using the MapReduce paradigm. While Mahout's core algorithms for clustering, classification and batch based collaborative filtering are…

•Collaborative filtering

•Clustering

•Classification

•Frequent itemset mining

•Distributed Algebraic optimizer

•R-Like DSL Scala API

•Linear algebra operations

Free

• Access to extensible programming framework

• Build scalable algorithms

• Access many premade algorithms

GNU Octave

GNU Octave is a high-level language intended for numerical computations. Through its command line interface, users can solve linear and nonlinear problems numerically and perform other numerical experiments in a language that is mostly compatible with Matlab. Its features include a powerful mathematics-oriented syntax with built-in plotting and visualization tools, and it is free software that runs on GNU/Linux, macOS, BSD, and Windows. Because the Octave syntax is largely compatible with Matlab, it can run many Matlab scripts. It can be run in several ways - in GUI mode, as a console, or invoked as…

•High level language intended for numerical computations

•Solving linear and nonlinear problems numerically

•Powerful mathematics-oriented syntax

•Runs on GNU/Linux, macOS, BSD, and Windows

•Freely redistributable

Free

•Drop-in compatible with Matlab scripts

•Comprehensive help installation

•Built-in plotting and visualization tools

GraphLab Create

GraphLab Create is a machine learning platform for building intelligent, predictive applications, which involves cleaning the data, developing features, training a model, and creating and maintaining a predictive service. These intelligent applications provide predictions for use cases including recommenders, sentiment analysis, fraud detection, churn prediction and ad targeting. Trained models can be deployed on Amazon Elastic Compute Cloud (EC2) and monitored through Amazon CloudWatch. They can be queried in real-time via a RESTful API and the entire deployment pipeline is seen through a visual dashboard. The time from prototyping to production is dramatically reduced for GraphLab Create users. Dato is also…

• Scalable Data Structures

• Deep Learning

• Image Analytics

• Model Optimization

• Feature Engineering

• Nearest Neighbors

• Regression

• Machine Learning Visualizations

• Anomaly Detection

• Text Analytics

• Clustering

• C++ SDK Plugin Architecture

• Classification

• Pattern Mining

• Graph Analytics

Contact for Pricing

• Predict the likelihood that customers will churn, understand the influential factors, and take action to prevent it from happening

• Transform images for tagging, search, and feature extraction

• Compose and share data pipelines

ELKI

The ELKI framework is written in Java and built around a modular architecture. Most currently included algorithms belong to clustering, outlier detection and database indexes. A key concept of ELKI is to allow the combination of arbitrary algorithms, data types, distance functions and indexes and evaluate these combinations. When developing new algorithms or index structures, the existing components can be reused and combined. ELKI is modeled around a database core, which uses a vertical data layout that stores data in column groups (similar to column families in NoSQL databases). This database core provides nearest neighbor search, range/radius search, and distance…

• Open source data mining software

• High performance and scalability

• Simple visualization window

• Data management tasks

• Standard Java API

Free

• Java data mining software

• Allows R code

• Data mining and data management are handled as separate tasks

Apache UIMA

Unstructured Information Management applications are software systems that analyze large volumes of unstructured information in order to discover knowledge that is relevant to an end user. An example UIM application might ingest plain text and identify entities, such as persons, places, organizations; or relations, such as works-for or located-at. UIMA enables applications to be decomposed into components, for example "language identification" => "language specific segmentation" => "sentence boundary detection" => "entity detection (person/place names etc.)". Each component implements interfaces defined by the framework and provides self-describing metadata via XML descriptor files. The framework manages these components and the data flow…

•Infrastructure

•Components

•Frameworks

• Development source code issue management

• Tooling

• Servers

KNIME Analytics Platform Community

KNIME Analytics Platform is the leading open solution for data-driven innovation, helping you discover the potential hidden in your data, mine for fresh insights, or predict new futures. It offers more than 1000 modules, hundreds of ready-to-run examples, a comprehensive range of integrated tools, and the widest choice of advanced algorithms available. This vast arsenal of native nodes, community contributions, and tool integrations makes KNIME Analytics Platform the perfect toolbox for any data scientist. https://www.youtube.com/watch?v=fw0Vb2gLsgA

• Powerful Analytics

• Data & Tool Blending

• Open Platform

• Over 1000 Modules and Growing

•Connectors for all major file formats and databases

•Support for a wealth of data types: XML, JSON, images, documents, and many more

•Native and in-database data blending & transformation

•Math & statistical functions

•Advanced predictive and machine learning algorithms

•Workflow control

•Tool blending for Python, R, SQL, Java, Weka, and many more

•Interactive data views & reporting

Free

• Churn analysis

• Social media sentiment analysis

• Credit scoring

TANAGRA

Tanagra is free data mining software for academic and research purposes. It provides several data mining methods from exploratory data analysis, statistical learning, machine learning and the databases area. It is a successor of SIPINA, which means that various supervised learning algorithms are provided, especially an interactive and visual construction of decision trees. Tanagra is very powerful because it contains supervised learning as well as other paradigms such as clustering, factorial analysis, parametric and nonparametric statistics, association rules, feature selection and construction algorithms. The main goal of this project is giving researchers and students easy-to-use data mining software and second goal is…

•Free data mining software for academic and research purposes

•Provides several data mining methods from exploratory data analysis, statistical learning, machine learning and databases area

•Acts more as an experimental platform

•Open source project

Free

• Easy to use data mining software

• Interactive utilization

• A wide set of data sources

Rattle GUI

Rattle is Free Open Source Software and the source code is available from the Bitbucket repository. Rattle gives the user the freedom to review the code, use it for whatever purpose the user likes, and extend it however they like, without restriction. Rattle is a popular GUI for data mining using R. It presents statistical and visual summaries of data, transforms data so that it can be readily modelled, builds both unsupervised and supervised models from the data, presents the performance of models graphically, and scores new datasets. One of the most important features is that all of the user’s interactions…

•File Inputs

•Statistics

•Statistical tests

•Clustering

•Modeling

•Evaluation

•Charts

•Transformations

Free

• Learn and develop skills in R

• Provides ease of use

• Build your own models

CMSR Data Miner

StarProbe Data Miner, or CMSR Data Miner Suite, is software that provides an integrated environment for predictive modeling, segmentation, data visualization, statistical data analysis, and rule-based model evaluation. For advanced power users, an integrated analytics and rule-engine environment is also provided. Its features include deep learning modeling and RME-EP, a powerful expert system shell rule engine that supports predictive models such as neural networks, self organizing maps, decision trees, and regression. It has been developed to use SQL-like expressions which users can learn very easily and quickly. Also, RME-EP expert system rules can be written by non-IT…

• Deep Learning Modeling (RME-EP).

• Neural network (multi-hidden layer deep neural network support).

• Neural clustering and segmentation (Self Organizing Maps: SOM).

• (Cramer) Decision tree classification and Segmentation.

• Hotspot drill-down and profiling analysis.

• Regression.

• Business rules - Predictive expert systems shell rule engine.

• Rule-based predictive model evaluation.

• Powerful charts: 3D bars, bars, histograms, histobars, scatterplots, boxplots ...

• Segmentation and gains analysis.

• Response and profit analysis.

• Correlation analysis.

• Cross-sell Basket Analysis.

• Drill-down statistics.

• Cross tables with deviation/hotspot analysis.

• Groupby tables with deviation/hotspot analysis.

• SQL query/batch tools.

• Statistics: Mono, Bi, ANOVA, ...

• Database scoring: Apply predictive/segmentation models to database records.

• Connect to all major relational DBMS through JDBC.

• Data Miner optimized for Microsoft SQL Server, MySQL, PostgreSQL, MS Office Access.

• Deploy/publish predictive models on Android phones and Android tablets with MyDataSay app.

• Database table import/export tools (Support character strings, integer and real numbers).

• Super fast and Big data (up to 2 billion records).

• Runs on multiple OS: Windows, Linux, Mac OS X, AIX, Solaris, HPUX.

1 year free license for evaluation

Free academic use

• Perform statistical data analysis quickly

• Drill down into the most complex data

• Access visualization tools for data results

OpenNN

OpenNN is an open source class library written in C++ programming language which implements neural networks, a main area of machine learning research. The library implements any number of layers of non-linear processing units for supervised learning. This deep architecture allows the design of neural networks with universal approximation properties. The main advantage of OpenNN is its high performance. It is developed in C++ for better memory management and higher processing speed, and implements CPU parallelization by means of OpenMP and GPU acceleration with CUDA. OpenNN has been written in ANSI C++. This means that the library can be built…

• Unified Modeling Language (UML)

• OpenNN is based on the multilayer perceptron

• The loss index

Free

•Technology evaluation

•Proof of concept

•Design and implementation

Dataiku DSS Community

Dataiku DSS is the collaborative data science software platform for teams of data scientists, data analysts, and engineers to explore, prototype, build, and deliver their own data products more efficiently. Dataiku develops this advanced analytics software solution for companies that want to build their own data products. Dataiku DSS offers a collaborative, team-based user interface for data scientists and beginner analysts, a unified framework for both development and deployment of data projects, and immediate access to all the features and tools required to design data products from scratch. The visual interface of Dataiku…

•Data connectors

•Data transformation

•Transformation engines

•Data Visualization

•Data Mining

•Machine Learning

Free

•Connect to more than 25 data storage systems

•Extend with plugins

•Visualize and re-run Workflows

DataPreparator

DataPreparator is a free software tool designed to assist with common tasks of data preparation (or data preprocessing) in data analysis and data mining. DataPreparator offers features such as character removal, text replacement, date conversion, remove selected attributes, move selected attributes, equal width, equal frequency, equal frequency from grouped data, delete records containing missing values, remove attributes containing missing values, impute missing values, predict missing values from model (dependence tree, Naive Bayes model), include missing value patterns, Z-score method, Box-plot method, create binary attributes, replace nominal values by indices, reduce number of labels, decimal, linear, hyperbolic tangent, soft-max,…

• Data access from text files, relational databases, and Excel workbooks

• Handling of large volumes of data (since data sets are not stored in the computer memory, with the exception of Excel workbooks and result sets of some databases where database drivers do not support data streaming)

• Stand alone tool, independent of any other tools

• User friendly graphical user interface

• Operator chaining to create sequences of preprocessing transformations (operator tree)

• Creating of model tree for test/execution data

Free

• Provides a variety of techniques for data cleaning, transformation, and exploration

• Chaining of preprocessing operators into a flow graph (operator tree)

• Handling of large volumes of data (since data sets are not stored in the computer memory)

LIBLINEAR

LIBLINEAR is an open source library that data scientists, developers and end users use to perform large-scale linear classification. Its easy-to-use command-line tools and library calls support logistic regression and linear support vector machines. LIBLINEAR uses the same data format as LIBSVM, a general-purpose SVM solver, and its usage is similar. LIBLINEAR offers several language interfaces that data scientists and developers can use. The language interfaces presented…

• Multi-class classification: 1) one-vs-the-rest, 2) Crammer & Singer

• Cross validation for model evaluation

• Automatic parameter selection

• Probability estimates (logistic regression only)

• Weights for unbalanced data

• MATLAB/Octave, Java, Python, Ruby interfaces

Contact for Pricing

• Speeds up training on shared-memory systems

• Supports L2-regularized classifiers

• Supports L2-loss linear SVR and L1-loss linear SVR
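One accessible way to try a LIBLINEAR-backed linear classifier from Python is scikit-learn's `LinearSVC`, which the scikit-learn documentation describes as implemented in terms of liblinear. The sketch below assumes scikit-learn is installed and uses made-up, clearly separable data; it is not LIBLINEAR's own Python interface.

```python
import numpy as np
from sklearn.svm import LinearSVC

# Made-up, linearly separable training data: two groups of 2-D points.
X = np.array([[0, 0], [0, 1], [1, 0],
              [4, 4], [4, 5], [5, 4]], dtype=float)
y = np.array([0, 0, 0, 1, 1, 1])

# Linear support vector classifier; training is delegated to liblinear.
model = LinearSVC(C=1.0, random_state=0)
model.fit(X, y)

predictions = model.predict([[0.5, 0.5], [4.5, 4.5]])
print("predicted classes:", predictions)
print("weights:", model.coef_, "bias:", model.intercept_)
```

The learned weights and bias define a single separating line, which is what makes linear models fast to train on large, sparse datasets.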

Chemicalize.org

Chemicalize provides instant cheminformatics solutions. It is a powerful online platform for chemical calculations, search, and text processing. Calculation view provides structure-based predictions for any molecule structure. Available calculations include elemental analysis, names and identifiers, pKa, logP/logD, as well as solubility. Search view lets you perform text-based and structure-based searches against the Chemicalize database to find web page sources and associated structures of the results. You can even combine text-based and structural queries to achieve advanced search capabilities. Web viewer displays any web page with chemical structures highlighted on it. Recognized formats are IUPAC names, common names, InChI, and SMILES…

•Calculations

•Chemical search

•Webpage annotation

•Compliance checker

Free; some features are paid. Visit the website for payment details.

Vowpal Wabbit

The Vowpal Wabbit (VW) project is a fast out-of-core learning system sponsored by Microsoft Research and (previously) Yahoo! Research. Support is available through the mailing list. There are two ways to have a fast learning algorithm: (a) start with a slow algorithm and speed it up, or (b) build an intrinsically fast learning algorithm. This project is about approach (b), and it's reached a state where it may be useful to others as a platform for research and experimentation. There are several optimization algorithms available with the baseline being sparse gradient descent (GD) on a loss function (several are available),…

•Input format

•Speed

•Scalability
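
The baseline mentioned above, sparse gradient descent on a loss function, can be illustrated with a short sketch. This is not Vowpal Wabbit's implementation: the function name `sgd_update`, the squared loss, the learning rate, and the toy stream are all assumptions chosen for illustration.

```python
def sgd_update(weights, features, label, learning_rate=0.1):
    """One sparse gradient step on the squared loss 0.5 * (prediction - label) ** 2."""
    # Only the weights of features present in this example are read...
    prediction = sum(weights.get(name, 0.0) * value for name, value in features.items())
    error = prediction - label
    # ...and only those weights are written, so the cost of a step depends on
    # the number of non-zero features, not on the size of the model.
    for name, value in features.items():
        weights[name] = weights.get(name, 0.0) - learning_rate * error * value
    return prediction


# Toy stream generated by label = 2 * x + 1 (illustrative data only).
stream = [
    ({"bias": 1.0, "x": 1.0}, 3.0),
    ({"bias": 1.0, "x": 2.0}, 5.0),
    ({"bias": 1.0, "x": 3.0}, 7.0),
]

weights = {}
for _ in range(2000):
    for features, label in stream:
        sgd_update(weights, features, label)

print(weights)
```

Because each example is consumed and then discarded, the same loop works when the examples are read from disk or a network stream instead of a list, which is the idea behind out-of-core learning.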

mlpy

mlpy (Machine Learning Python) is a Python module for machine learning built on top of NumPy/SciPy (a Python-based ecosystem of open-source software for mathematics, science, and engineering) and the GNU Scientific Library (a numerical library for C and C++ programmers that provides a wide range of mathematical routines such as random number generators, special functions and least-squares fitting). It provides a wide range of state-of-the-art machine learning methods for supervised and unsupervised problems and aims at a reasonable compromise among modularity, maintainability, reproducibility, usability and efficiency. It provides high-level functions and classes allowing, with few lines…

•Python module for machine learning

•Provides high-level functions and classes

•Works with Python 2 and 3

•Open Source

•Compatible with PyInstaller

Free

• Provides a wide range of machine learning methods

• Perform many statistical analyses

• Easy to manipulate data

Dlib

Dlib is a modern C++ toolkit containing machine learning algorithms and tools for creating complex software in C++ to solve real-world problems. It is used in a wide range of domains including robotics, embedded devices, mobile phones, and large high-performance computing environments. It is free of charge, which means that users can use it in any app. Major features of Dlib include: documentation – complete and precise documentation for every class and function, with lots of example programs; high-quality portable code – good unit test coverage, tested on MS Windows,…

•Contains machine learning algorithms and tools for creating complex software in C++ to solve real-world problems

•Provides complete and precise documentation for every class and function

•High quality portable code

•Graphical model inference algorithms

Free

• Documentation for every class and function

• Debugging modes that check documented preconditions for functions

• Good unit test coverage

CLUTO

CLUTO is a software package for clustering low- and high-dimensional datasets and for analyzing the characteristics of the various clusters. It is well suited for clustering data sets arising in many diverse application areas including information retrieval, customer purchasing transactions, web, GIS, science, and biology. CLUTO's distribution consists of both stand-alone programs and a library through which an application program can directly access the various clustering and analysis algorithms implemented in CLUTO. This software has several features such as multiple classes of clustering algorithms – partitional, agglomerative, & graph-partitioning based; multiple similarity/distance functions – Euclidean distance, cosine, correlation coefficient, extended Jaccard,…

•Intended for clustering low- and high-dimensional datasets and for analyzing the characteristics of the various clusters

•Multiple classes of clustering algorithms

•Multiple methods for effectively summarizing the clusters

•Can scale to very large datasets

Free

• Can scale to very large datasets containing hundreds of thousands of objects and tens of thousands of dimensions.

• Extensive cluster visualization capabilities and output options.
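
The similarity and distance functions named in the CLUTO description (Euclidean distance, cosine, correlation coefficient, extended Jaccard) have standard textbook definitions. The sketch below writes them out for plain Python lists. It is not CLUTO code, and the function names are chosen here for illustration.

```python
import math


def dot(x, y):
    return sum(a * b for a, b in zip(x, y))


def euclidean_distance(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))


def cosine_similarity(x, y):
    return dot(x, y) / (math.sqrt(dot(x, x)) * math.sqrt(dot(y, y)))


def correlation_coefficient(x, y):
    # Pearson correlation: cosine similarity of the mean-centred vectors.
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    return cosine_similarity([a - mean_x for a in x], [b - mean_y for b in y])


def extended_jaccard(x, y):
    # Extended (Tanimoto) Jaccard coefficient for real-valued vectors.
    xy = dot(x, y)
    return xy / (dot(x, x) + dot(y, y) - xy)


x = [1.0, 0.0, 2.0]
y = [2.0, 0.0, 4.0]
print(euclidean_distance(x, y), cosine_similarity(x, y),
      correlation_coefficient(x, y), extended_jaccard(x, y))
```

Cosine and correlation ignore vector length, so `x` and `y` above count as identical in direction, while Euclidean distance and extended Jaccard still register the difference in magnitude.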

TraMineR

TraMineR is an R package (R is a free software environment for statistical computing and graphics which compiles and runs on a wide variety of platforms such as UNIX platforms, Windows and MacOS) intended for mining, describing and visualizing sequences of states or events, and more generally discrete sequence data. Its primary goal is the analysis of biographical longitudinal data in the social sciences, such as data describing careers or family trajectories. Many of its features also apply to other kinds of categorical sequence data. These features include: handling of longitudinal data and conversion between various sequence formats; plotting sequences (density plot, frequency…

•Intended for mining, describing and visualizing sequences of states or events, and more generally discrete sequence data

•Individual longitudinal characteristics of sequences

•Sequence transversal characteristics by age point

•Parallel coordinate plot of event sequences

•Identifying most discriminating event subsequences

Free

• Visualize sequence data sets

• Explore the sequence data set by computing and visualizing descriptive statistics

• Build a typology of transitions from school to work

ROSETTA

ROSETTA is a toolkit for analyzing tabular data within the framework of rough set theory. It is designed to support the overall data mining and knowledge discovery process: from initial browsing and preprocessing of the data, via computation of minimal attribute sets and generation of if-then rules or descriptive patterns, to validation and analysis of the induced rules or patterns. This toolkit is not geared specifically towards any particular application domain; it is intended as a general-purpose tool for discernibility-based modeling. It offers a highly intuitive GUI environment in which data-navigational abilities are emphasized. The main orientation of the GUI is…

•Toolkit for analyzing tabular data within the framework of rough set theory

•Intended as a general-purpose tool for discernibility-based modeling

•Import/export – partial integration with DBMSs via ODBC

•Completion of decision tables with missing values

Free

• Import/export

• Preprocessing

• Computation

Pandas

Pandas is an open source, BSD-licensed library providing high-performance, easy-to-use data structures and data analysis tools for the Python programming language. Pandas is a NumFOCUS sponsored project, which helps ensure the success of development of pandas as a world-class open-source project and makes it possible to donate to the project. The best way to get pandas is to install it via conda. Builds for osx-64, linux-64, linux-32, win-64, and win-32 for Python 2.7, Python 3.4, and Python 3.5 are all available. The release described here is a major release from 0.19.2 and includes a number of API changes, deprecations, new features, enhancements, and performance improvements along with a large…

•New .agg() API for Series/DataFrame similar to the groupby-rolling-resample API’s,

•Integration with the feather-format, including a new top-level pd.read_feather() and DataFrame.to_feather() method,

•The .ix indexer has been deprecated,

•Panel has been deprecated

•Addition of an IntervalIndex and Interval scalar type,

•Improved user API when accessing levels in .groupby(),

•Improved support for UInt64 dtypes,

•A new orient for JSON serialization, orient='table' that uses the Table Schema spec,

•Experimental support for exporting DataFrame.style formats to Excel

•Window Binary Corr/Cov operations now return a MultiIndexed DataFrame rather than a Panel, as Panel is now deprecated,

•Support for S3 handling now uses s3fs,

•Google BigQuery support now uses the pandas-gbq library

•Switched the test framework to use pytest

Free

• Perform fast, efficient data manipulation

• Access easy-to-use data structures

• Intelligent alignment of data
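
A few of the release features listed above can be tried in a handful of lines. The snippet below is a small sketch that assumes a pandas version at least as new as the release described. The column names and values are made up for illustration.

```python
import json

import pandas as pd

df = pd.DataFrame({"a": [1, 2, 3], "b": [4.0, 5.0, 6.0]})

# .agg() applies several reductions at once: one result row per function.
summary = df.agg(["min", "max"])

# .loc (label-based) and .iloc (position-based) are the replacements
# for the deprecated .ix indexer.
first_b = df.loc[0, "b"]
last_a = df.iloc[-1, 0]

# orient="table" embeds a Table Schema description next to the data.
table = json.loads(df.to_json(orient="table"))
field_names = [field["name"] for field in table["schema"]["fields"]]

# An Interval scalar is closed on the right by default: (0, 5].
interval = pd.Interval(0, 5)
contains_three = 3 in interval

print(summary)
print(first_b, last_a, field_names, contains_three)
```

The feather and Excel-style features from the list are left out here because they depend on optional third-party packages.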

Fityk

Fityk is a program for data processing and nonlinear curve fitting. It is primarily used by scientists who analyse data from powder diffraction, chromatography, photoluminescence and photoelectron spectroscopy, infrared and Raman spectroscopy, and other experimental techniques. It is mainly used to fit peaks – bell-shaped functions (Gaussian, Lorentzian, Voigt, Pearson VII, bifurcated Gaussian, EMG, Doniach-Sunjic, etc.) – but it is suitable for fitting any curve to 2D (x,y) data. Fityk offers the following features: an intuitive graphical interface (and also a command line interface), support for many data file formats, thanks to the xylib library, dozens of built-in functions and support for…

• Intuitive graphical interface (and also command line interface),

• Support for many data file formats, thanks to the xylib library,

• Dozens of built-in functions and support for user-defined functions,

• Equality constraints,

• Fitting systematic errors of the x coordinate of points

• Manual, graphical placement of peaks and auto-placement using peak detection algorithm,

• Various optimization methods

• Handling series of datasets,

• Automation with macros (scripts) and embedded Lua for more complex scripting

• Open source licence (GPLv2+).

•1 month subscription: $115 (≈ €90)

•1 year subscription: $199 (≈ €150)

•2 years subscription: $299 (≈ €225)

• Operate on an intuitive graphical interface

• Support for many data file formats

• Access to dozens of built-in functions

KEEL

KEEL (Knowledge Extraction based on Evolutionary Learning) is an open source (GPLv3) Java software tool that can be used for a large number of different knowledge data discovery tasks. KEEL provides a simple GUI based on data flow to design experiments with different datasets and computational intelligence algorithms (paying special attention to evolutionary algorithms) in order to assess the behavior of the algorithms. It contains a wide variety of classical knowledge extraction algorithms, preprocessing techniques (training set selection, feature selection, discretization, imputation methods for missing values, among others), computational intelligence based learning algorithms, hybrid models, statistical methodologies for contrasting experiments…

•Evolutionary Algorithms (EAs)

•Data pre-processing algorithms

•Statistical library

•User-friendly interface, oriented to the analysis of algorithms.

•Allows experiments to be created in on-line mode, as educational support for learning how the included algorithms operate.

•Knowledge Extraction Algorithms Library. The main lines of work are:

•Different evolutionary rule learning models have been implemented

•Fuzzy rule learning models with a good trade-off between accuracy and interpretability.

•Evolution and pruning in neural networks, product unit neural networks, and radial base models.

•Genetic Programming: Evolutionary algorithms that use tree representations for extracting knowledge.

•Algorithms for extracting descriptive rules based on patterns subgroup discovery have been integrated.

•Data reduction (training set selection, feature selection and discretization). EAs for data reduction have been included.

Free

• Data management

• Design of experiments

• Design of imbalanced experiments

ADaMSoft

ADaMSoft is a free and open-source system for data management, data and web mining, and statistical analysis. ADaMSoft offers procedures such as Principal component analysis, Text mining, Web Mining, Analysis of three-way time arrays, Linear regression with fuzzy dependent variable, Utility, Synthesis table, Import a data table (file) in ADaMSoft (create a dictionary), Charts, Neural network (MLP), Association measures for qualitative variables, Linear algebra, Evaluate the results of function approximation, Data Management, Function fitting, Error localization and data imputation, Decision trees, Statistics on quantitative variables, Record linkage, Evaluate the result of classification models, Cluster analysis (k-means method), Correspondence analysis, Data…

• Use the same package in different platforms; you just need to have installed the Java Runtime Environment

• Obtain a single product for Data Integration, Analytical ETL, Data Analysis, Reporting,...

• Consider a powerful syntax to recode, modify, transform your data, that is based on the Java language, enriched with many functions that access data sets

• Easily access the most common data sources and associate proper metadata with them

• Use hundreds of statistical procedures to analyze your data, to visualize their internal relations, etc.

• Free

Sentic API

Sentic API provides the semantics and sentics (that is, the denotative and connotative information) associated with the concepts of SenticNet 4, a semantic network of commonsense knowledge that contains 50,000 nodes (words and multiword expressions) and thousands of connections (relationships between nodes). Sentic API is available in 40 different languages and lets users selectively access the latest version of the knowledge base online. Since polarity detection is the most common sentiment analysis task, Sentic API provides two fine-grained commands for it.

• Denotative and connotative information

• Return only semantics, sentics, moodtags, and polarity

• Available in 40 different languages

• Provides the semantics

• Free

• Provides the sentics

• Provides two fine-grained commands for polarity

• Also accessible online through a python package

ML-Flex

ML-Flex uses machine-learning algorithms to derive models from independent variables, with the purpose of predicting the values of a dependent (class) variable. For example, machine-learning algorithms have long been applied to the Iris data set, introduced by Sir Ronald Fisher in 1936, which contains four independent variables (sepal length, sepal width, petal length, petal width) and one dependent variable (species of Iris flowers = setosa, versicolor, or virginica). Deriving prediction models from the four independent variables, machine-learning algorithms can often differentiate between the species with near-perfect accuracy. One important aspect to consider in performing a machine-learning experiment is the validation…

•Configuring Algorithms

•Creating an Experiment File

•List of Experiment Settings

•Running an Experiment

•List of Command-line Arguments

•Executing Experiments Across Multiple Computers

•Modifying Java Source Code

•Creating a New Data Processor

•Integrating with Third-party Machine Learning Software

Free

•Flexible processing of multiple data sets

•Delivering experiments across multiple systems

•Integrates with third-party machine learning software

Databionic ESOM

The Databionic ESOM Tools are a suite of programs for data mining tasks such as clustering, visualization, and classification with emergent self-organizing maps (ESOM). Visualization, clustering, and classification of high-dimensional data using databionic principles can be performed interactively or automatically. Its features include ESOM training, U-Matrix visualizations, explorative data analysis and clustering, ESOM classification, and creation of U-Maps. Training of ESOM is available with different initialization methods, training algorithms, distance functions, parameter cooling strategies, ESOM grid topologies, and neighborhood kernels. The Databionic ESOM Tools also contain…

•Different initialization methods

•Training algorithms

•Distance functions

•Parameter cooling strategies

•ESOM grid topologies

•Neighborhood kernels.

Free

•Creation of classifier and automated application to new data

•Creation of non-redundant U-Maps

•Training with different initialization methods

MALLET

MALLET (MAchine Learning for LanguagE Toolkit) is a Java-based package for statistical natural language processing, document classification, clustering, topic modeling, information extraction, and other machine learning applications to text. Sophisticated tools for document classification are provided: efficient routines for converting text to "features", a wide variety of algorithms (including Naïve Bayes, Maximum Entropy, and Decision Trees), and code for evaluating classifier performance using several commonly used metrics. It also provides tools for sequence tagging for applications such as named-entity extraction from text. Algorithms include Hidden Markov Models, Maximum Entropy Markov Models, and Conditional Random Fields and all…

•Java-based package for statistical natural language processing, document classification, clustering, topic modeling, information extraction, and other machine learning applications to text

•Provides tools for sequence tagging

•Routines for transforming text documents into numerical representations

•Add-on package called GRMM

•Open Source Software

Free

• Perform document classification easily

• Transform text to numerical representations

• Optimize numerical representations
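
Converting text to "features", as described for MALLET, usually starts with a bag-of-words count vector. MALLET itself is a Java package, so the sketch below is only a language-neutral illustration written in Python. The names `tokenize`, `build_vocabulary` and `to_feature_vector` are hypothetical and do not come from MALLET's API.

```python
import re
from collections import Counter


def tokenize(text):
    # Lowercase and keep runs of letters and digits as tokens.
    return re.findall(r"[a-z0-9]+", text.lower())


def build_vocabulary(documents):
    # Assign each distinct token the next free column index.
    vocab = {}
    for doc in documents:
        for token in tokenize(doc):
            vocab.setdefault(token, len(vocab))
    return vocab


def to_feature_vector(text, vocab):
    # Count occurrences of each vocabulary token; unknown tokens are dropped.
    counts = Counter(tokenize(text))
    return [counts.get(token, 0) for token in vocab]


docs = ["The cat sat", "the dog sat down"]
vocab = build_vocabulary(docs)
print(vocab)
print(to_feature_vector("the dog and the cat", vocab))
```

A classifier such as Naïve Bayes can then be trained on these count vectors instead of on raw strings.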

streamDM

streamDM is open source software for mining big data streams that uses Spark Streaming, developed at Huawei Noah's Ark Lab. This software is licensed under Apache Software License v2.0. Big data stream learning is more challenging than batch learning because data may not keep the same distribution over the lifetime of the stream. Learning algorithms need to be very efficient because each example that arrives in a stream can be processed only once, or the examples need to be summarized with a small memory footprint. Spark Streaming, which makes building scalable fault-tolerant streaming applications easy, is an extension of the…

•Open source software for mining big data streams

•Spark Streaming extension

•Implemented methods: CluStream, Hoeffding Decision Trees, bagging, StreamKM++, HyperplaneGenerator

Free
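
The constraint described for stream learning, where each example is seen once and only a small summary is kept, can be illustrated with Welford's one-pass algorithm for mean and variance. This is a generic sketch, not streamDM code. The class name `RunningStats` and the sample values are assumptions for illustration.

```python
class RunningStats:
    """One-pass mean and sample variance (Welford's algorithm), constant memory."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, value):
        # Each value is folded into the summary and can then be discarded.
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)

    @property
    def variance(self):
        return self._m2 / (self.count - 1) if self.count > 1 else 0.0


stats = RunningStats()
for value in [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]:
    stats.update(value)

print(stats.count, stats.mean, stats.variance)
```

The summary holds three numbers regardless of how many examples have passed, which is the kind of memory footprint stream algorithms aim for.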

ADaM

The Algorithm Development and Mining System (ADaM) developed by the Information Technology and Systems Center at the University of Alabama in Huntsville is used to apply data mining technologies to remotely-sensed and other scientific data. The mining and image processing toolkits consist of interoperable components that can be linked together in a variety of ways for application to diverse problem domains. ADaM has over 100 components that can be configured to create customized mining processes. Preprocessing and analysis utilities aid users in applying data mining to their specific problems. New components can easily be added to adapt the system to…

• Component Architecture

• Distributed Services

• Custom Applications

• Grid-enabled Services

Free for educational and research use by non-profit institutions and US government agencies only. Other organizations may use ADaM only for evaluation purposes, and any further use requires prior approval. The software may not be sold or redistributed without prior approval. Download is available on the project site.

•Data Mining and Image Processing Toolkits

MiningMart

MiningMart can help to reduce the time spent on data preprocessing. The MiningMart project aims at new techniques that give decision-makers direct access to information stored in databases, data warehouses, and knowledge bases. The main goal is to support users in making intelligent choices by pursuing the following objectives: operators for preprocessing with direct database access; use of machine learning for the preprocessing; detailed documentation of successful cases; high quality discovery results; scalability to very large databases; and techniques that automatically select or change representations. MiningMart’s basic idea is to store best practice cases of preprocessing chains that were developed by experienced users. The data…

• Operators for preprocessing with direct database access

• Use of machine learning for the preprocessing

• Detailed documentation of successful cases

• High quality discovery results

• Scalability to very large databases

• Techniques that automatically select or change representations.

Free

• Speed up data pre-processing

• Access to detailed documentation of successful cases

• Access to high quality discovery results

Modular toolkit for Data Processing

The Modular toolkit for Data Processing (MDP) is a library of widely used data processing algorithms that can be combined according to a pipeline analogy to build more complex data processing software. From the user’s perspective, MDP consists of a collection of supervised and unsupervised learning algorithms, and other data processing units (nodes) that can be combined into data processing sequences (flows) and more complex feed-forward network architectures. Given a set of input data, MDP takes care of successively training or executing all nodes in the network. This allows the user to specify complex algorithms as a series of simpler…

• Modular toolkit for Data Processing (MDP)

• Implementation of new supervised and unsupervised learning algorithms is easy and straightforward

• Valid educational tool

Free

• Access simpler data processing steps

• Build more complex data processing software

• Perform parallel implementation of basic nodes and flows
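
The pipeline analogy described for MDP, where nodes are combined into flows that are trained and executed in sequence, can be sketched in plain Python. The classes below are illustrative only and are not taken from MDP's actual API. `CenterNode` and `ScaleNode` are hypothetical examples of processing units.

```python
class Node:
    """A processing unit that can be trained and then executed."""

    def train(self, data):
        pass  # stateless nodes need no training

    def execute(self, data):
        raise NotImplementedError


class CenterNode(Node):
    def train(self, data):
        self.mean = sum(data) / len(data)

    def execute(self, data):
        return [x - self.mean for x in data]


class ScaleNode(Node):
    def train(self, data):
        # Fall back to 1.0 when every value is zero.
        self.scale = max(abs(x) for x in data) or 1.0

    def execute(self, data):
        return [x / self.scale for x in data]


class Flow:
    """Trains each node on the output of the already trained nodes before it."""

    def __init__(self, nodes):
        self.nodes = nodes

    def train(self, data):
        for node in self.nodes:
            node.train(data)
            data = node.execute(data)

    def execute(self, data):
        for node in self.nodes:
            data = node.execute(data)
        return data


flow = Flow([CenterNode(), ScaleNode()])
flow.train([1.0, 2.0, 3.0])
print(flow.execute([1.0, 2.0, 3.0]))
```

The user only lists the nodes; the flow handles the order of training and execution, which is what lets a complex algorithm be specified as a series of simpler steps.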

Jubatus

Jubatus supports basic tasks including classification, regression, clustering, nearest neighbor, outlier detection, and recommendation. Jubatus is the first open source platform for online distributed machine learning on the data streams of Big Data. Jubatus uses a loose model sharing architecture for efficient training and sharing of machine learning models by defining three fundamental operations, Update, Mix, and Analyze, in a similar way to the Map and Reduce operations in Hadoop. In addition, Jubatus supports scalable machine learning processing: it can handle 100,000 or more data items per second using clusters of commodity, shared-nothing hardware.…

•Scalable

•Real-Time

•Difference from Hadoop and Mahout

•Deep-Analysis
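
The three operations named above (Update, Mix, Analyze) can be pictured with a toy linear model. The sketch below is an assumption-laden illustration, not Jubatus code: the `Worker` class, the perceptron-style update, and the plain weight averaging in `mix` are all chosen here for simplicity.

```python
class Worker:
    """Holds a local linear model that is trained on the worker's own data."""

    def __init__(self):
        self.weights = {}

    def analyze(self, features):
        # Analyze: score an example with the current local model.
        return sum(self.weights.get(name, 0.0) * value for name, value in features.items())

    def update(self, features, label, learning_rate=0.1):
        # Update: adjust the local model only when the example is misclassified.
        if self.analyze(features) * label <= 0:
            for name, value in features.items():
                self.weights[name] = self.weights.get(name, 0.0) + learning_rate * label * value


def mix(workers):
    # Mix: share models loosely by averaging weights across workers
    # (a simplifying assumption for this sketch).
    names = set().union(*(worker.weights for worker in workers))
    averaged = {
        name: sum(worker.weights.get(name, 0.0) for worker in workers) / len(workers)
        for name in names
    }
    for worker in workers:
        worker.weights = dict(averaged)


a, b = Worker(), Worker()
a.update({"x": 1.0}, +1)
b.update({"y": 1.0}, -1)
mix([a, b])
print(a.weights, b.analyze({"x": 1.0, "y": 0.0}))
```

Each worker learns from its own part of the stream and only the compact model is exchanged, so no raw data has to move between machines.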

LIBSVM

LIBSVM is a library for Support Vector Machines (SVMs). LIBSVM offers tools such as Multi-core LIBLINEAR, Distributed LIBLINEAR, LIBLINEAR for Incremental and Decremental Learning, LIBLINEAR for One-versus-one Multi-class Classification, Large-scale rankSVM, LIBLINEAR for more than 2^32 instances/features (experimental), Large linear classification when data cannot fit in memory, Weights for data instances, Fast training/testing for polynomial mappings of data, Cross Validation with Different Criteria (AUC, F-score), Cross Validation using Higher-level Information to Split Data, LIBSVM for dense data, LIBSVM for string data, Multi-label classification, LIBSVM Extensions at Caltech, Feature selection tool, LIBSVM data sets, SVM-toy based on Javascript, SVM-toy in 3D,…

• Different SVM formulations

• Efficient multi-class classification

• Cross validation for model selection

• Probability estimates

• Various kernels (including precomputed kernel matrix)

• Weighted SVM for unbalanced data

• Both C++ and Java sources

• GUI demonstrating SVM classification and regression

• Python, R, MATLAB, Perl, Ruby, Weka, Common LISP, CLISP, Haskell, OCaml, LabVIEW, and PHP interfaces. C# .NET code and a CUDA extension are available.

• It's also included in some data mining environments: RapidMiner, PCP, and LIONsolver.

• Automatic model selection which can generate contour of cross validation accuracy.

• Free

• Solving SVM optimization problems

• Solving theoretical convergence

• Solving multi-class classification

Arcadia Data Instant

Arcadia Data Instant uses smart acceleration to enable ultra-fast analytics and BI with agile drag-and-drop access. Arcadia Data Instant provides an in-cluster execution engine for scale-out performance on Apache Hadoop and other modern data platforms with no data movement. Arcadia Data Instant supports visualizations on Apache Kafka. Through this, users have an excellent platform to download a kit quickly and get started with exploring visualizations of Kafka topics. The key features offered by Arcadia Data Instant include connect, discover, model, visualise, interact, manage, scale, optimize, security, share and publish, and advanced analytics. The connect feature allows accessing data inside Hadoop…

• The discover feature lets users browse data sources, structure and content, with full granularity and transparency

• Set hierarchies and logical datasets, for blending visualizations across sources

• The visualize feature provides easy-to-use, familiar, web-based, self-service drag-and-drop authoring

• Flow and funnel algorithms that make it easy to measure correlation

• Create semantic relationships across multiple sources

• Assemble dashboards and applications of visuals that show the user’s work

Contact for pricing

• Provides an in-cluster execution engine for scale-out performance on Apache Hadoop

• Achieve linear scalability of records with native in-cluster execution

• Simplifies deployment and monitoring with certified integration

What is Data Mining Software?

Data mining is the process of identifying patterns, analyzing data and transforming unstructured data into structured and valuable information that can be used to make informed business decisions. Data mining software allows an organization to analyze data from a wide range of databases and detect patterns.

What are the top Free Data Mining Software?

Orange Data mining, Anaconda, R Software Environment, Scikit-learn, Weka Data Mining, Shogun, DataMelt, Natural Language Toolkit, Apache Mahout, GNU Octave, GraphLab Create, ELKI, Apache UIMA, KNIME Analytics Platform Community, TANAGRA, Rattle GUI, CMSR Data Miner, OpenNN, Dataiku DSS Community, DataPreparator, LIBLINEAR, Chemicalize.org, Vowpal Wabbit, mlpy, Dlib, CLUTO, TraMineR, ROSETTA, Pandas, Fityk, KEEL, ADaMSoft, Sentic API, ML-Flex, Databionic ESOM, MALLET, streamDM, ADaM, MiningMart, Modular toolkit for Data Processing, Jubatus, LIBSVM, Arcadia Data Instant are some of the top free data mining software.
