Top 26 Free Software for Text Analysis, Text Mining, Text Analytics

Information is one of the most important resources in the contemporary business environment. It’s hard for any company to succeed without having sufficient information about its customers, employees, and other key stakeholders.

Every day, companies receive unstructured and structured text from various sources such as survey results, tweets, call center notes, phone transcripts, online customer reviews, recorded interactions, emails, and other documents. These sources provide raw text, which is not easy to understand without using the right text analysis tool. It’s possible to perform text analytics manually, but the manual process is ineffective.

Traditional systems use keywords and are unable to read and understand language in emails, tweets, web pages, and text documents. For these reasons, companies use text analytics software to analyze large volumes of text data. The software helps users to gain insights from text data in order to act accordingly.

The top free software for text analysis, text mining, and text analytics includes Apache OpenNLP, Google Cloud Natural Language API, General Architecture for Text Engineering (GATE), Datumbox, KH Coder, QDA Miner Lite, RapidMiner Text Mining Extension, VisualText, TAMS, Natural Language Toolkit, Carrot2, Apache Mahout, KNIME Text Processing, Textable, Apache UIMA, tm (Text Mining Package), Pattern, Gensim, Aika, Distributed Machine Learning Toolkit, LPU, Apache Stanbol, LingPipe, S-EM, LibShortText, and Coh-Metrix.

Text Analytics is the process of converting unstructured text data into meaningful data. The text analysis applications scan a set of documents written in a natural language. These applications model the document set for predictive classification purposes or populate a database or search index with the information extracted.

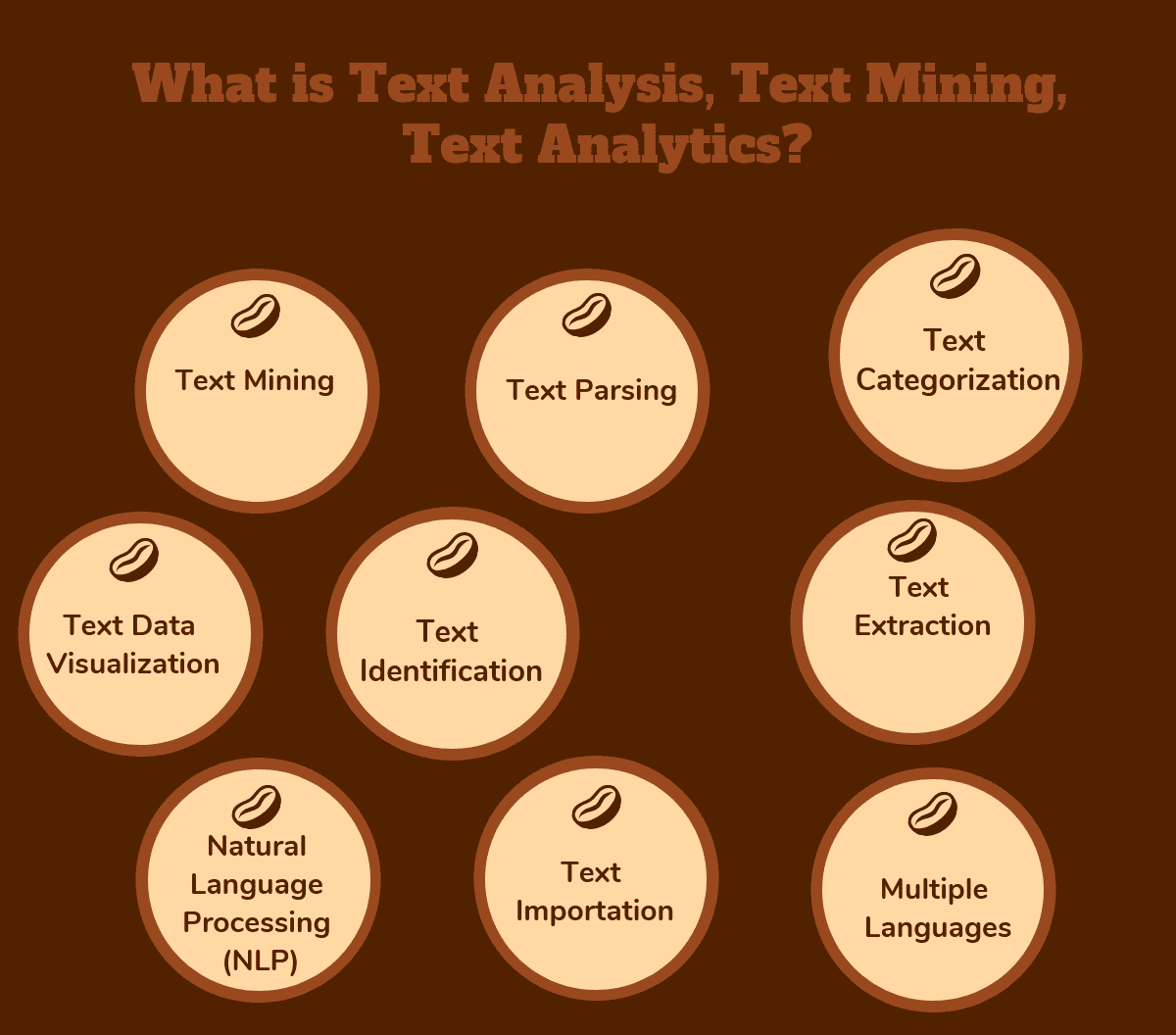

What are Text Analysis, Text Mining, Text Analytics Software?

Text Analytics allows users to gain insights from structured and unstructured data. The software mines text and uses natural language processing (NLP) algorithms to derive meaning from huge volumes of text. Text Analytics is used to measure customer opinions, product reviews, and feedback, and to provide search, sentiment analysis, and entity modeling in support of fact-based decision making. Text analysis software uses many linguistic, statistical, and machine learning techniques.

Organizations receive huge amounts of unstructured and structured text from multiple sources every day and it’s hard to know the meaning and make the most of this text without using the right data mining tools.

Text analytics software unlocks unstructured text and helps users understand its meaning. Companies use it to identify patterns, themes, and topics of interest from different sources of information. For example, if a company wants to know more about its customers or employees, it can use text analytics software to mine and analyze data from customer and employee emails, feedback, and tweets. In simple terms, text analytics software turns text data into meaningful information. Organizations need this information to take practical actions.

What are the Features of Text Analysis, Text Mining, Text Analytics Software?

- Text Importation: The ability to import text is one of the most important features of text analytics software because users need to retrieve text data from different sources. The best data mining software can import data in different formats such as plain text, HTML, PDF, RTF, CSV, MS Access, and MS Excel.

- Natural Language Processing (NLP): Text analytics software uses natural language processing algorithms to detect language, process text, classify topics, and perform readability assessments. It also provides services like parsing, tokenization, sentence segmentation, named entity extraction, and part-of-speech tagging.

- Text Data Visualization: Another important feature of text analytics software is the ability to visualize processed text. The software leverages machine learning and NLP to help users visualize data in different ways for easy interpretation. Software users can explore relationships between terms and use interactive diagrams to display results.

- User-Friendly Interface: The best data analytics applications have a user-friendly and flexible user interface that allows users to perform different tasks. Some of these tasks include merging topics, displaying topics, illustrating terms, managing process-flow diagrams, managing tables, and choosing languages.

- Multiple Languages: Most text analytics applications support various languages, including English, Chinese, Dutch, Greek, Thai, French, Finnish, Italian, and others.

Some of the benefits of Text Analysis Software include:

- Quick analysis of large amounts of unstructured and structured text from different sources.

- Users gain insights from text data and take the necessary action based on the data.

- Companies can use analyzed data to identify, understand, and meet the needs of their customers and employees.

- Analyzed data can provide early warning signs if there is an imminent problem.

Top Free Software for Text Analysis, Text Mining, Text Analytics

Apache OpenNLP

Apache OpenNLP is an open source, machine learning based Java toolkit for processing natural language text. OpenNLP provides services such as tokenization, sentence segmentation, part-of-speech tagging, named entity extraction, chunking, parsing, and co-reference resolution. These tasks are usually required to build more advanced text processing services. OpenNLP also includes maximum entropy and perceptron based machine learning. The goal of the OpenNLP project is to create a mature toolkit for the above-mentioned tasks. An additional goal is to provide a large…

• Named Entity Recognition (NER)

• Document Categorizer

• Sentence Detection

• Parts of Speech Tagging

• Tokenization

• Lemmatization

• Language Detection

Free

• Advanced text processing services.

• Supports the most common NLP tasks.

Google Cloud Natural Language API

Google Cloud Natural Language API reveals the structure and meaning of text by offering powerful machine learning models in an easy to use REST API. You can use it to extract information about people, places, events and much more, mentioned in text documents, news articles or blog posts. You can use it to understand sentiment about your product on social media or parse intent from customer conversations happening in a call center or a messaging app. You can analyze text uploaded in your request or integrate with your document storage on Google Cloud Storage. Extract actionable insights on product reception…

• Syntax Analysis

• Entity Analysis

• Sentiment Analysis

• Entity Sentiment Analysis

• Multi-Language

• Integrated REST API

• 0 - 5K units/month - Free

• Above 5K units/month: priced per feature, with tiers from 5K+ to 1M units and onwards

• Insights from your customers

• Multimedia, Multi-lingual Support

• Content Classification

General Architecture for Text Engineering – GATE

GATE (General Architecture for Text Engineering) is a Java suite of tools used for all sorts of natural language processing tasks, including information extraction in many languages. It has been developed at the University of Sheffield since 1995. GATE has grown over the years to include a desktop client for developers, a workflow-based web application, a Java library, an architecture and a process. GATE includes components for diverse language processing tasks, such as parsers, morphology, tagging, information retrieval tools, information extraction components for various languages, and many others. GATE…

• Capable of solving almost any text processing problem

• Creating robust and maintainable text processing workflows

• In active use for all sorts of language processing tasks and applications

Free

• Comprehensiveness

• Scalability

• Openness, extensibility and reusability

Datumbox

Datumbox offers a machine learning platform composed of 14 classifiers and natural language processing functions, including sentiment analysis, topic classification, readability assessment, language detection, and much more. The Datumbox API is a web service that lets developers use these tools from a website, software, or mobile application, and it gives access to all of the functions the Datumbox service supports. The API provides developer access using REST-like RPC-style operations over HTTP POST requests. Responses are JSON formatted. Access requires a user account and an API key. The Datumbox web service uses "REST-like" RPC-style operations…

• Sentiment Analysis

• Twitter Sentiment Analysis

• Subjectivity Analysis

• Topic Classification

• Spam Detection

• Adult Content Detection

Contact for Pricing

• Readability Assessment

• Language Detection

• Commercial Detection

• Machine learning via API.

• Powerful open source framework.

• Easy to use API.

KH Coder

KH Coder is free software for quantitative content analysis and text data mining. It can also be used for computational linguistics. KH Coder can analyze Japanese, English, French, German, Italian, Portuguese, and Spanish texts. On raw input texts it offers searching and statistical analysis functions such as KWIC (keyword in context) concordance, collocation statistics, co-occurrence networks, self-organizing maps, multidimensional scaling, hierarchical cluster analysis, and correspondence analysis. Other features include the development of your own categories or dictionaries, frequency lists, cross tabulation,…

• Stopwords

• Word frequency list

• Co‐occurrence network of words

• Correspondence analysis of words

• Hierarchical cluster analysis

• Multi-dimensional scaling

Free

• Quantitative content analysis

• Text mining

• Utilized for computational linguistics.
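
To make two of the features named above more concrete, here is a minimal Python sketch of a word frequency list (with stopwords) and a KWIC concordance. It is an illustration only and does not use or reproduce KH Coder itself; the function names `tokenize`, `word_frequencies`, and `kwic`, the sample sentence, and the stopword set are all assumptions made for this example.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())

def word_frequencies(tokens, stopwords=frozenset()):
    """Count how often each token occurs, skipping stopwords."""
    return Counter(t for t in tokens if t not in stopwords)

def kwic(tokens, keyword, window=2):
    """Return (left context, keyword, right context) for every occurrence."""
    hits = []
    for i, tok in enumerate(tokens):
        if tok == keyword:
            left = " ".join(tokens[max(0, i - window):i])
            right = " ".join(tokens[i + 1:i + 1 + window])
            hits.append((left, tok, right))
    return hits

# Hypothetical sample text and stopword list, chosen only for illustration.
text = "The service was slow. The staff was friendly, but the service desk was closed."
tokens = tokenize(text)
freq = word_frequencies(tokens, stopwords={"the", "was", "but"})
concordance = kwic(tokens, "service", window=2)

for left, kw, right in concordance:
    print(f"{left:>20} [{kw}] {right}")
```

A wider `window` shows more context around each hit; real concordance tools usually also keep the original casing and punctuation, which this sketch discards.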

QDA Miner Lite

QDA Miner Lite is a free computer-assisted qualitative analysis software package that can be used to analyze textual data such as interview and news transcripts and open-ended responses, as well as still images. It offers basic CAQDAS features such as importation of documents from plain text, RTF, HTML, and PDF, as well as data stored in Excel, MS Access, CSV, and tab-delimited text files; importation from other qualitative coding software such as ATLAS.ti, HyperRESEARCH, and Ethnograph, from transcription tools like Transana and Transcriber, and from Reference Information System (.RIS) files. It also provides intuitive coding…

• Importation of documents from plain text, RTF, HTML, and PDF, as well as data stored in Excel, MS Access, CSV, and tab-delimited text files.

• Importation from other qualitative coding software such as ATLAS.ti, HyperRESEARCH, and Ethnograph, from transcription tools like Transana and Transcriber, and from Reference Information System (.RIS) files.

• Intuitive coding using codes organized in a tree structure.

• Ability to add comments (or memos) to coded segments, cases or the whole project.

• Fast Boolean text search tool for retrieving and coding text segments.

Free

• Code frequency analysis with bar chart, pie chart and tag clouds.

• Coding retrieval with Boolean (and, or, not) and proximity operators (includes, enclosed, near, before, after).

• Export tables to XLS, Tab Delimited, CSV formats, and Word format

• Easy-to-use

• Analysis of transcripts

• Analysis of interviews
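
The Boolean and proximity retrieval listed above can be sketched in a few lines of Python. This is a rough illustration of the idea, not QDA Miner Lite's implementation: the helper names, the sample segments, and the exact meaning given to "near" (two terms within a fixed number of tokens) are assumptions.

```python
import re

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())

def contains_all(tokens, *terms):
    """Boolean AND: every term occurs in the segment."""
    return all(t in tokens for t in terms)

def contains_any(tokens, *terms):
    """Boolean OR: at least one term occurs in the segment."""
    return any(t in tokens for t in terms)

def near(tokens, a, b, distance=3):
    """Proximity: terms a and b occur within `distance` tokens of each other."""
    pos_a = [i for i, t in enumerate(tokens) if t == a]
    pos_b = [i for i, t in enumerate(tokens) if t == b]
    return any(abs(i - j) <= distance for i in pos_a for j in pos_b)

# Hypothetical text segments, e.g. open-ended survey responses.
segments = [
    "The delivery was late and the box was damaged.",
    "Great price, fast delivery.",
    "The price was fine but support never answered.",
]
tokenized = [tokenize(s) for s in segments]

# delivery AND NOT late
and_not_hits = [s for s, t in zip(segments, tokenized)
                if contains_all(t, "delivery") and not contains_any(t, "late")]
# support OR damaged
or_hits = [s for s, t in zip(segments, tokenized)
           if contains_any(t, "support", "damaged")]
# price NEAR delivery (within 3 tokens)
near_hits = [s for s, t in zip(segments, tokenized)
             if near(t, "price", "delivery", distance=3)]
```

Each query returns the matching segments, which is the step a coding tool would follow by attaching a code to the retrieved text.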

RapidMiner Text Mining Extension

RapidMiner is an open source data mining framework that offers many operators, which can be combined into a process. A graphical user interface (GUI) lets users connect the operators with each other in the process view. The major function of a process is the analysis of the data that is retrieved at the beginning of the process. Many packages are available for RapidMiner, such as text processing, Weka extension, parallel processing, web mining, reporting extension, series processing, PMML, community, and R extension packages. The RapidMiner Text Extension adds all operators necessary for statistical text…

• Statistical text analysis

• Load texts from many different data sources

• Apply filtering techniques, then analyze your text data

• Supports several text formats including plain text, HTML, or PDF as well as other data sources

• Standard filters for tokenization, stemming, stopword filtering, or n-gram generation

Free

• Analyze your text data

• Different filtering techniques
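
The standard filters mentioned above (tokenization, stopword filtering, stemming, n-gram generation) are easy to picture as a chain of small steps. The following Python sketch shows one possible chain; it is not RapidMiner's operator API. The stopword list is a tiny made-up sample, and `naive_stem` is a deliberately crude suffix stripper used here in place of a real stemming algorithm such as Porter's.

```python
import re

# Small illustrative stopword list (an assumption, not a standard list).
STOPWORDS = {"the", "a", "an", "and", "of", "to", "is", "are", "was", "were"}

def tokenize(text):
    """Lowercase the text and keep alphabetic tokens only."""
    return re.findall(r"[a-z]+", text.lower())

def remove_stopwords(tokens):
    """Drop tokens that appear in the stopword list."""
    return [t for t in tokens if t not in STOPWORDS]

def naive_stem(token):
    """Strip a few common English suffixes; far cruder than a Porter stemmer."""
    for suffix in ("ing", "ed", "es", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token

def ngrams(tokens, n=2):
    """Return all runs of n consecutive tokens as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

text = "The customers were asking about delayed refunds"
tokens = tokenize(text)
filtered = remove_stopwords(tokens)
stems = [naive_stem(t) for t in filtered]
bigrams = ngrams(stems, 2)
```

The order of the steps matters: filtering stopwords before building n-grams means the bigrams join content words that were not adjacent in the original sentence.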

VisualText

VisualText is an integrated development environment (IDE) for building information extraction systems, natural language processing systems, and text analyzers. It can be used to automatically populate databases with the critical content buried in textual documents. VisualText has been used to build a number of applications, including analyzers for extracting information from resumes, systems that categorize web pages, an analyzer that monitors a financial transaction chat, email analyzers, selective web spiders, and more. It tightly integrates the NLP++ programming language for rapid…

• Integrated development environment (IDE)

• Rich, integrated GUI tool set and data views

• NLP++ Programming Language

• Hierarchical Knowledge Base Management System

• Automatic Rule Generation (RUG)

• NLP++ integrates code, rules, parse trees, and KB

• Interpreted NLP++ execution

• Compilation of analyzer and KB

• Excellent GUI support for debugging analyzers

Contact for Pricing

• Reduces resources needed to build text analyzers

• Accelerates development of deep, accurate analyzers

• Easy to access/modify knowledge

TAMS

TAMS stands for Text Analysis Markup System. It is a convention for identifying themes in texts (web pages, interviews, field notes). It was designed for use in ethnographic and discourse research. TAMS Analyzer is a program that works with TAMS to let you assign ethnographic codes to passages of a text just by selecting the relevant text and double clicking the name of the code on a list. It then allows you to extract, analyze, and save coded information. TAMS Analyzer is open source; it is released under GPL v2. The Macintosh version of the program also includes full support…

• PDF coding and analysis support

• Image (jpg, etc.) coding and analysis support

• Improved layout for video coding and analysis

• New icon set

Free

• Identify themes in texts

• Assign ethnographic codes to passages of a text

• Allows you to extract, analyze, and save coded information

Natural Language Toolkit

NLTK is a leading platform for building Python programs to work with human language data. It provides easy-to-use interfaces to over 50 corpora and lexical resources such as WordNet, along with a suite of text processing libraries for classification, tokenization, stemming, tagging, parsing, and semantic reasoning, wrappers for industrial-strength NLP libraries, and an active discussion forum. Thanks to a hands-on guide introducing programming fundamentals alongside topics in computational linguistics, plus comprehensive API documentation, NLTK is suitable for linguists, engineers, students, educators, researchers, and industry users alike. NLTK is available for Windows, Mac OS X, and Linux. Best of all, NLTK…

• Feature structure types

• Parsing feature structure strings

• Feature paths

• Reentrance

• Text classification

Free

• Tokenization

• Stemming

• Tagging

Carrot2

Carrot2 is an open source search results clustering engine. It can automatically organize small collections of documents, e.g. search results, into thematic categories. Carrot2 is a library and a set of supporting applications you can use to build a search results clustering engine. Such an engine will organize your search results into topics, fully automatically and without external knowledge such as taxonomies or preclassified content. Carrot2 integrates very well with both open source and proprietary search engines. Apart from the two main specialized document clustering algorithms (Suffix Tree Clustering and Lingo), Carrot2 offers ready-to-use components for fetching search results from…

• Two high-quality document clustering algorithms

• Integrates with public and open source search engines (Solr, Lucene)

• Easy to integrate with Java and non-Java software

• Ships a GUI application for tuning clustering for specific collections

• Ships with a simple web application

• Native C# / .NET API

Free

• Easily integrates with both Java and non-Java platforms

Apache Mahout

The Apache Mahout project’s goal is to build an environment for quickly creating scalable, performant machine learning applications. Apache Mahout is a simple and extensible programming environment and framework for building scalable algorithms, and it contains a wide variety of premade algorithms for Scala with Apache Spark, H2O, and Apache Flink. It also uses Samsara, a vector math experimentation environment with R-like syntax that works at scale. Apache™ Mahout is a library of scalable machine-learning algorithms, implemented on top of Apache Hadoop and using the MapReduce paradigm. While Mahout's core algorithms for clustering, classification and batch based collaborative filtering are…

• Collaborative filtering

• Clustering

• Classification

• Frequent itemset mining

• Distributed algebraic optimizer

• R-like DSL Scala API

• Linear algebra operations

Free

• Access to extensible programming framework

• Build scalable algorithms

• Access many premade algorithms

KNIME Text Processing

The KNIME Text Processing feature was designed and developed to read and process textual data and transform it into numerical data (document and term vectors), so that regular KNIME data mining nodes (e.g. for clustering and classification) can be applied. This feature allows for the parsing of texts available in various formats (e.g. XML, Microsoft Word, or PDF) and the internal representation of documents and terms as KNIME data cells stored in a data table. It is possible to recognize and tag different kinds of named entities such as names of persons and organizations, genes and proteins or chemical compounds, thus…

• Natural language processing (NLP)

• Text mining

• Information retrieval.

• KNIME Analytics Platform - Open Source and Free

• KNIME TeamSpace: €2,000 per user/year

• KNIME Server Lite: €7,500 for 5 users/year

• KNIME WebPortal: €12,500 for 5 users/year

• KNIME Server: €21,000 for 5 users/year

• Lets users read, process, mine, and visualize textual data in a convenient way
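
The idea of turning text into numerical document vectors, described above, can be shown with a short Python sketch. It is not KNIME code and says nothing about how KNIME stores its data cells; the function names and the three sample documents are assumptions. Each document becomes a list of term counts over a shared vocabulary, which is the kind of table a clustering or classification step can then consume.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and keep alphabetic tokens only."""
    return re.findall(r"[a-z]+", text.lower())

def build_vocabulary(docs):
    """Sorted list of every distinct term across all documents."""
    return sorted({term for doc in docs for term in tokenize(doc)})

def document_vector(doc, vocabulary):
    """Term counts for one document, in vocabulary order."""
    counts = Counter(tokenize(doc))
    return [counts[term] for term in vocabulary]

# Hypothetical mini-corpus for illustration.
docs = ["good product good price", "bad product", "good support"]
vocabulary = build_vocabulary(docs)
vectors = [document_vector(d, vocabulary) for d in docs]

for doc, vec in zip(docs, vectors):
    print(vec, doc)
```

Raw counts are the simplest weighting; schemes such as TF-IDF rescale the same vectors so that terms common to every document count for less.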

Textable

Textable was initially developed as part of a pedagogical innovation project at the University of Lausanne. Installing Textable-Prototypes through Orange adds a new widget named Theatre Classique, which offers a straightforward way of importing theater plays from the Théâtre Classique website. Orange Textable is an open-source add-on bringing advanced text-analytical functionalities to the Orange Canvas data mining software package. It essentially enables users to build data tables on the basis of text data, by means of a flexible and intuitive interface. Textable can import text from keyboard, files, or URLs, process any…

• Basic text analysis

• Quantitative text analysis

• Text recoding

• Extensibility

• Interoperability

• Ease of access

Free

• Powerful

• Visual

• Developed in academia

Apache UIMA

Unstructured Information Management (UIM) applications are software systems that analyze large volumes of unstructured information in order to discover knowledge that is relevant to an end user. An example UIM application might ingest plain text and identify entities, such as persons, places, organizations; or relations, such as works-for or located-at. UIMA enables applications to be decomposed into components, for example "language identification" => "language specific segmentation" => "sentence boundary detection" => "entity detection (person/place names etc.)". Each component implements interfaces defined by the framework and provides self-describing metadata via XML descriptor files. The framework manages these components and the data flow…

• Infrastructure

• Components

• Frameworks

• Development source code issue management

• Tooling

• Servers
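
The component decomposition described above can be pictured with a small Python sketch. It is only an analogy: Apache UIMA is a Java framework with its own interfaces and XML descriptors, none of which appear here. The component functions, the shared `doc` dictionary, the language heuristic, and the `GAZETTEER` lookup table are all assumptions invented for the example, and the "language specific segmentation" stage is left out for brevity.

```python
import re

def identify_language(doc):
    """Toy heuristic: call the text English if it has common English words."""
    words = set(re.findall(r"[a-z]+", doc["text"].lower()))
    doc["language"] = "en" if words & {"the", "is", "and", "in", "for"} else "unknown"
    return doc

def detect_sentences(doc):
    """Split after '.', '!' or '?' when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", doc["text"].strip())
    doc["sentences"] = [s for s in parts if s]
    return doc

def detect_entities(doc, gazetteer):
    """Look up known names from a small hand-made list."""
    doc["entities"] = [(name, label) for name, label in gazetteer.items()
                       if name in doc["text"]]
    return doc

def run_pipeline(text, components):
    """Pass one shared document through each component in order."""
    doc = {"text": text}
    for component in components:
        doc = component(doc)
    return doc

# Hypothetical entity list for illustration.
GAZETTEER = {"Alice": "person", "Sheffield": "place"}

result = run_pipeline(
    "Alice works for the university. She lives in Sheffield.",
    [identify_language, detect_sentences, lambda d: detect_entities(d, GAZETTEER)],
)
```

Because every component reads from and writes to the same document object, stages can be swapped or reordered without changing the others, which is the main point of this kind of decomposition.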

tm – Text Mining Package

Text Mining Infrastructure in R (tm) provides a framework for text mining applications within R. R is a free software environment for statistical computing and graphics which compiles and runs on a wide variety of UNIX platforms, Windows and MacOS. The tm package offers functionality for managing text documents, abstracts the process of document manipulation and eases the usage of heterogeneous text formats in R. The package has integrated database back-end support to minimize memory demands. Advanced metadata management is implemented for collections of text documents to ease the use of large document sets enriched with metadata.…

• Integrated database back-end

• Advanced metadata management

• Text document management

• Preprocessing and manipulation mechanisms

• Generic Filter Architecture

Free

• Can be extended to fit custom demands

• Provides native support for reading in several classic file formats

• Supports the export from document collections to term-document matrices

Pattern

Pattern is a web mining module for the Python programming language. It has tools for data mining (Google, Twitter and Wikipedia API, a web crawler, an HTML DOM parser), natural language processing (part-of-speech taggers, n-gram search, sentiment analysis, WordNet), machine learning (vector space model, clustering, SVM), network analysis and visualization. The pattern.web module is a web toolkit that contains APIs (Google, Gmail, Bing, Twitter, Facebook, Wikipedia, Wiktionary, DBPedia, Flickr, ...), a robust HTML DOM parser and a web crawler. The pattern.en module is a natural language processing (NLP) toolkit for English. Because language is ambiguous (e.g., I can ↔ a…

• Data mining tools

• Natural language processing

• Network analysis

• Machine learning

Free

Gensim

Gensim is a free Python library for scalable statistical semantics. It analyzes plain-text documents for semantic structure and retrieves semantically similar documents. In addition, Gensim is a robust, efficient and hassle-free piece of software to realize unsupervised semantic modelling from plain text. It stands in contrast to brittle homework-assignment implementations that do not scale on one hand, and robust Java-esque projects that take forever just to run “hello world” on the other. Gensim is licensed under the OSI-approved GNU LGPLv2.1 license. This means that it’s free for both personal and commercial use, but if users make any modification to gensim that users distribute…

• Scalability

• Efficient implementations

• Platform independent

• Converters & I/O formats

• Robust

• Similarity queries

Free
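
A similarity query, as listed among the features above, ranks stored documents by how close they are to a query. The Python sketch below shows the basic idea with plain bag-of-words vectors and cosine similarity. It does not call Gensim, whose own models are considerably more capable; the function names and the three sample documents are assumptions made for illustration.

```python
import math
import re
from collections import Counter

def bag_of_words(text):
    """Map each lowercase word to its count in the text."""
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def most_similar(query, documents):
    """Return (score, document) pairs, best match first."""
    q = bag_of_words(query)
    scored = [(cosine_similarity(q, bag_of_words(d)), d) for d in documents]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)

# Hypothetical document collection for illustration.
documents = [
    "human computer interaction survey",
    "graph of trees and minors",
    "user interface for computer systems",
]
ranking = most_similar("computer interaction", documents)

for score, doc in ranking:
    print(f"{score:.3f}  {doc}")
```

Word-count vectors only match documents that share literal words with the query; semantic models aim to also retrieve documents that use different words for the same topic.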

Aika

Aika is an open source text mining engine that automatically extracts semantic information from text and annotates the text with it. Where the extracted information is ambiguous, Aika generates several hypothetical interpretations of the meaning of the text and picks the most likely one. The Aika algorithm is based on various ideas and approaches from the field of AI, such as artificial neural networks, frequent pattern mining and logic based expert systems. Aika is written in Java and distributed under the Apache license. Aika is based on non-monotonic logic, meaning that it first draws tentative conclusions only. In other words, Aika is able…

• Non monotonic logic

• Activation object propagation

• Linguistic modelling

• Propagates activation objects through its network

• Ability to accurately recognize named entities within texts and predict the correct meaning of the name.

Distributed Machine Learning Toolkit

Distributed Machine Learning Toolkit (DMTK) is an open source project from Microsoft. Achieving better accuracy in distributed machine learning applications requires a large amount of computation resources, which has become a main challenge for machine learning researchers and practitioners. Microsoft released DMTK, which contains both algorithmic and system innovations. These innovations make machine learning tasks on big data highly scalable, efficient, and flexible. It comprises four components. • LightLDA: an extremely fast and scalable topic model algorithm, with an O(1) Gibbs sampler and an efficient distributed implementation. • Distributed (Multisense) Word Embedding:…

• Bagging

• Column (feature) sub-sample

• Continued training with an input GBDT model

• Continued training with the input score file

• Weighted training

• Validation metric output during training

• Multi validation data

• Multi metrics

• Early stopping (both training and prediction)

• Prediction for leaf index

Free

• Flexibility

• Efficiency

• Big Data

LPU

LPU (which stands for Learning from Positive and Unlabeled data) is a text learning or classification system that learns from a set of positive documents and a set of unlabeled documents, with no labeled negative documents. This type of learning differs from classic text learning/classification, in which both positive and negative training documents are required. Given a set of positive documents and a set of unlabeled documents, the LPU algorithm learns a classifier in two steps: • Step 1: Identifying a set of reliable negative documents from the unlabeled set. For this step, LPU has three techniques, i.e., spy,…

• Cost-sensitive classification

• Ramp loss function

• Hinge loss function

• Can be used for either retrieval or classification.

Apache Stanbol

Apache Stanbol provides a set of reusable components for semantic content management. Apache Stanbol's intended use is to extend traditional content management systems with semantic services. Other feasible use cases include: direct usage from web applications (e.g. for tag extraction/suggestion; or text completion in search fields), 'smart' content workflows or email routing based on extracted entities, topics, etc. In order to be used as a semantic engine via its services, all components offer their functionalities in terms of a RESTful web service API. Apache Stanbol is designed to bring semantic technologies to existing content management systems (CMS). If you have a…

• Analyze textual content and enhance it with named entities

• Reasoning

• Knowledge Models

• Persistence

• Use locally defined entities

• Semantic search in portals

Free

• Direct usage from web applications

• 'Smart' content workflows or email routing based on extracted entities or topics

• Works with custom vocabularies

LingPipe

LingPipe is a toolkit for processing text using computational linguistics. LingPipe is used for tasks such as finding the names of people, organizations, or locations in news, automatically classifying Twitter search results into categories, and suggesting correct spellings of queries. LingPipe's architecture is designed to be efficient, scalable, reusable, and robust. Highlights include: Java API with source code and unit tests; multi-lingual, multi-domain, multi-genre models; training with new data for new tasks; n-best output with statistical confidence estimates; online training (learn-a-little, tag-a-little); thread-safe models and decoders for concurrent-read exclusive-write (CREW) synchronization; and character encoding-sensitive I/O.

• Java API with source code and unit tests

• Multi-lingual, multi-domain, multi-genre models

• Training with new data for new tasks

• N-best output with statistical confidence estimates

• Online training (learn-a-little, tag-a-little)

• Thread-safe models and decoders

• Character encoding-sensitive I/O

• Find the names of people, organizations or locations in news

• Automatically classify Twitter search results into categories

• Suggest correct spellings of queries

S-EM

S-EM is a text learning or classification system that learns from a set of positive and unlabeled examples, with no negative examples. It is based on a "spy" technique, naive Bayes, and the EM algorithm.

LibShortText

LibShortText is an open source tool for short-text classification and analysis. LibShortText can handle the classification of titles, questions, sentences, and short messages. It is more efficient than general text-mining packages. On a typical computer, processing and training 10 million short texts takes only around half an hour. An interactive tool for error analysis is included. Based on the property that each short text contains few words, LibShortText provides details in predicting each text.

Coh-Metrix

Coh-Metrix is a system for computing computational cohesion and coherence metrics for written and spoken texts. Coh-Metrix allows readers, writers, educators, and researchers to instantly gauge the difficulty of written text for the target audience.

You may also like to review the Text Analysis, Text Mining, Text Analytics proprietary software list:

Top software for Text Analysis, Text Mining, Text Analytics

You may also like to review the Top Qualitative Data Analysis Software proprietary software list:

Top Qualitative Data Analysis Software

You may also like to review the Top Free Qualitative Data Analysis Software list:

Top Free Qualitative Data Analysis Software

What are Text Analysis, Text Mining, Text Analytics Software?

Text analytics software allows users to gain insights from structured and unstructured data. The software mines text and uses natural language processing (NLP) algorithms to derive meaning from huge volumes of text. Text analytics is used to measure customer opinions, product reviews, and feedback, and to provide search, sentiment analysis, and entity modeling in support of fact-based decision making.

ADDITIONAL INFORMATION

Have you looked at the free, open source, web-based ?

ADDITIONAL INFORMATION

DiscoverText is freemium software with many powerful text analytics features. It is free for 30 days, and a core set of coding (labeling/annotation) features remains free after the 30-day trial expires.

ADDITIONAL INFORMATION

VisualText at http://www.textanalysis.com has been here for 15 years, and is a one-stop shop for developing the most accurate and complete NLP solutions. Free for non-commercial use (that is, till you are actually deploying or reaping revenue from your analyzers).

NLP++ is one of the only programming languages for NLP.

Check out the new website at http://www.nlpcloud.net

Amnon Meyers

CTO

Text Analysis International, Inc

ADDITIONAL INFORMATION

Hey,

I would like to recommend Twinword’s Text Analysis APIs.

Check out the website for a list of APIs for different functions of text analysis at:

https://www.twinword.com/developer-api.php

Cheers,

Fleur

ADDITIONAL INFORMATION

Coh-Metrix is a theoretically grounded computational linguistics facility that analyzes texts on multiple levels of language and discourse (Graesser et al., 2014; Graesser, McNamara, Louwerse, & Cai, 2004; D. S. McNamara, Graesser, McCarthy, & Cai, 2014).

ADDITIONAL INFORMATION

Interesting list. I’d like to nominate an addition to this. The Word Doctor is a Voice-To-Text word editor capable of analyzing document content and style. A first of its kind, the Word Doctor can be downloaded at: http://www.the-word-doctor.com

ADDITIONAL INFORMATION

Hi guys, I’m looking for an open source system that could provide me with tools to analyze group chat conversation in written format. Any suggestions?

ADDITIONAL INFORMATION

I have used Natural Language Processing on review-based texts to find insights. To identify issues or areas of concern, we wrote queries to find the Top 25 Negative Noun Tokens in sentences and pulled back the related sentences after Natural Language Processing. We then grouped those sentences for tagging in an interactive tree (a tree of sentences). Because of the refined sample size (Top 25 Tokens), we were able to identify the top issues affecting consumers very quickly. We would repeat this effort with each week of new data, slowly becoming the knowledge experts in the source domain.

As the unique issues started to dry up, we instituted a dynamic filtering system where every keyword in a sentence became a filter. We could shuffle the results with each click, spinning the results. We also implemented the ability to combine those keywords and flip them for even more complex dynamic filters. Then we started an automatic favourite-keyword identification system, so that in subsequent weeks of results I knew which keywords/favs were able to pull back the targeted results we were after.

So for those looking to find the top negative issues, this may be a plan of attack for identifying them, and something you could include in your own system. I have incorporated these tools into http://text-analyzer.com, where you can see this in action. Hope this helps someone trying to identify insights from customer feedback.

ADDITIONAL INFORMATION

Hello, we have recently launched our own semantic text analysis service, Digitalowl.

You might want to consider adding it to the list 🙂

ADDITIONAL INFORMATION

What happened to CAT?

https://cat.texifter.com/

It was in the Top 4 for many years, with huge numbers of scholarly use cases:

https://discovertext.com/2018/03/31/scholarly-citations-of-the-coding-analysis-toolkit/

Why was it removed from the list?

~Stu

ADDITIONAL INFORMATION

I have recently created a new text analytics tool that uses a referential framework to predict topics. It is free to use.

vizrefra.com

ADDITIONAL INFORMATION

NelSenso.Net is a collection of text-mining apps very useful for reading, writing and studying more quickly through text mining algorithms:

– Summazer is the free app capable of “squeezing” a text or a web page and extracting its juice, that is, the sentences with the highest information content, to generate an automatic online summary. It also shows the Saliency Score Distribution and the Quality Text Indicator.

– Abstrazer is the free app that can generate an automatic “abstract” of an article or web page.

– IRezer is the web application that allows the automatic extraction of keywords (tags/hashtags) and the most salient sentences of a text or web page. It orders results by saliency score or reading order.

– Clustezer is the app that allows the automatic extraction of the main topics of a text or web page through the automatic classification of the keyphrases contained in the text.

NelSenso.Net

ADDITIONAL INFORMATION

Information about free text analysis software is very helpful, thank you!

I would also like to mention worktime.com. It provides the ability to track time for more efficient work, so you can analyze the time spent on writing texts.