Top 9 Column-Oriented Databases

Organizations use data repositories to support business operations and decisions. Businesses today collect data from many sources, including in-house and cloud-based repositories, and keep it in databases so that it can be stored, managed, and retrieved.

With the increasing number of organizations that deal with large volumes of data on a daily basis, it is important to deploy a database that is highly effective. In most cases, database systems store and retrieve data from physical drives with mechanical read/write heads. The data retrieval process involves moving the head and consumes a lot of time if the organization does not use an effective database system.

This is one of the major problems faced by businesses that handle highly analytical or query-intensive operations. A suitable solution to this problem is to use wide columnar store databases.

What are the Top Column-Oriented Databases? MariaDB, CrateDB, ClickHouse, Greenplum Database, Apache HBase, Apache Kudu, Apache Parquet, Hypertable, and MonetDB are some of the top column-oriented databases.

What are Column-Oriented Databases?

Wide columnar store databases store data in records that can hold very large numbers of dynamic columns. Neither the column names nor the record keys are fixed. A column-oriented database serializes all of the values of a column together, then the values of the next column, and so on. In a column-oriented system, the primary key is the data, which maps back to rowids.
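The difference between the two serialization orders can be sketched in a few lines of Python. This is only an illustration: the sample table and both function names are made up for this example and do not come from any of the products listed here.

```python
# Hypothetical sample table: (rowid, name, age) records.
rows = [
    (1, "Ann", 34),
    (2, "Bob", 27),
    (3, "Cy", 41),
]

def serialize_row_oriented(records):
    """Row store: all fields of record 1, then all fields of record 2, and so on."""
    return [field for record in records for field in record]

def serialize_column_oriented(records):
    """Column store: all values of column 1, then all values of column 2, and so on."""
    return [field for column in zip(*records) for field in column]

row_layout = serialize_row_oriented(rows)
column_layout = serialize_column_oriented(rows)

print(row_layout)
print(column_layout)
```

In the column layout the three ages sit next to each other at the end of the sequence, so an aggregate over `age` can read one contiguous slice instead of stepping over names and rowids.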

Wide columnar store databases go by several names, including column databases, columnar databases, column-oriented databases, and column family databases. As the name suggests, columnar databases store data by column, unlike traditional relational databases, which store it by row. They organize related facts into columns with many subgroups, and neither the record keys nor the columns are fixed.

Several columns make a column family with multiple rows and the rows may not have the same number of columns. In simple terms, the information stored in several rows in an ordinary relational database can fit in one column in a columnar database. The columns can also have different names and datatypes.
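A column family in which rows carry different sets of columns can be modeled, very roughly, as a mapping from row key to a per-row dictionary. The sketch below assumes a hypothetical `profile` family and two helper functions, `put` and `get`, invented for this illustration; real wide-column stores add timestamps, persistence, and distribution on top of this idea.

```python
# Hypothetical column family "profile": row key -> {column name: value}.
profile = {
    "user:1": {"name": "Ann", "email": "ann@example.com"},
    "user:2": {"name": "Bob", "age": 27, "city": "Oslo"},
}

def put(family, row_key, column, value):
    """Add or overwrite one column in one row; the row is created on demand."""
    family.setdefault(row_key, {})[column] = value

def get(family, row_key, column, default=None):
    """Read one column; a column that a row does not have returns the default."""
    return family.get(row_key, {}).get(column, default)

# A new row with a single column that no other row has.
put(profile, "user:3", "gender", "F")

# Each row ends up with its own set of column names.
columns_per_row = {key: sorted(cols) for key, cols in profile.items()}
print(columns_per_row)
```

Nothing has to be declared up front: rows with two, three, or one column coexist in the same family, and reading a column a row lacks is not an error.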

Moreover, each column does not span beyond its row. Wide columnar databases are mainly used in highly analytical and query-intensive environments. This includes areas where large volumes of data items require aggregate computing. For example, wide columnar databases are suitable for data mining, business intelligence (BI), data warehouses, and decision support.

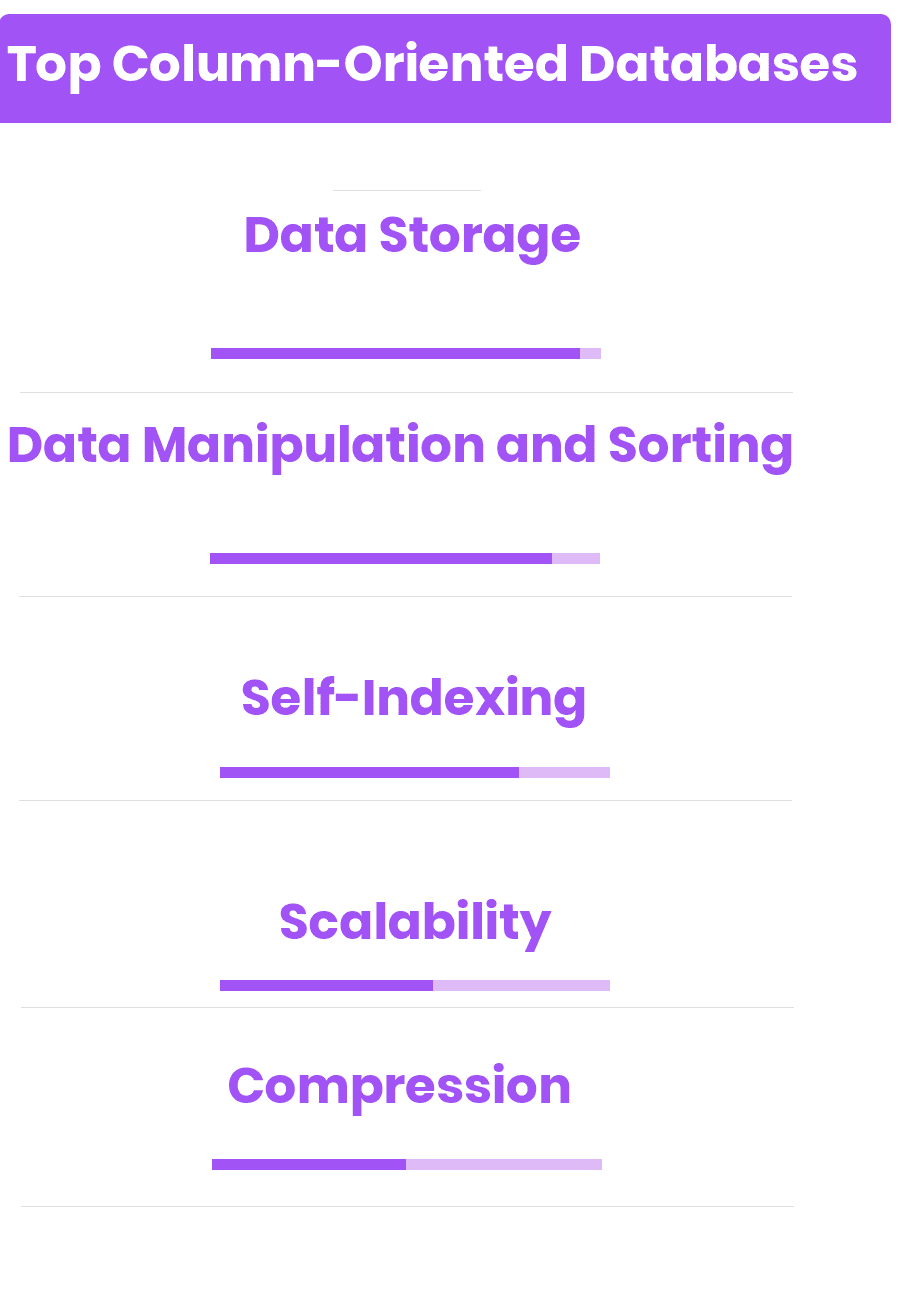

- Data Storage: Like all database systems, wide columnar databases enable users to store data. They use a durable storage system to prevent data loss. Business users can use them to store their customers’ details such as names, email addresses, gender, and age.

- Data Manipulation and Sorting: Database users can sort and manipulate data directly from a columnar database and there is no need to rely on the application.

- Self-Indexing: Some columnar databases can index columns to ensure effective data processing, querying, and analysis.

- Scalability: Columnar databases are highly scalable because they store data in columns rather than rows. The columns are easy to scale so they can store large volumes of data. This feature also allows users to spread data across many computing nodes and data stores.

- Compression: Columnar databases compress data more effectively than conventional relational databases that store data by row, because the values within a column share a data type and often repeat. This allows users to optimize storage size.
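To see why a column compresses well, consider run-length encoding, one common columnar scheme. The sketch below is a minimal illustration rather than any product's implementation; the `gender` column and the function names are assumptions made for the example.

```python
from itertools import groupby

def rle_encode(column):
    """Collapse each run of equal adjacent values into a (value, run_length) pair."""
    return [(value, len(list(run))) for value, run in groupby(column)]

def rle_decode(pairs):
    """Expand (value, run_length) pairs back into the original column."""
    return [value for value, count in pairs for _ in range(count)]

# Hypothetical "gender" column, sorted so that equal values sit next to each other.
gender = ["F"] * 4 + ["M"] * 3

encoded = rle_encode(gender)
print(encoded)
print(rle_decode(encoded) == gender)
```

Seven stored values shrink to two pairs here. The same trick would gain little on a row store, where a gender value is followed by an unrelated name or email address rather than by another gender value.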

Some of the benefits of column-oriented databases include:

- Columnar databases load extremely fast compared to conventional databases. They can load millions of rows in seconds and quickly perform columnar operations such as SUM and AVG.

- Wide columnar databases can store large volumes of non-volatile information for a very long time. They are suitable for applications that handle large datasets and large data storage clusters.

- Since columnar databases are self-indexing, they use less disk space than traditional relational databases.

Top Column-Oriented Databases

MariaDB

MariaDB is a powerful database server made by the original developers of MySQL. It turns data into structured information for a broad range of applications, from websites to banking. MariaDB is an enhanced drop-in replacement for MySQL. It provides a fast, robust, and scalable database server with a rich ecosystem of plugins, storage engines, and other database tools that make it versatile across a wide range of use cases. MariaDB provides an excellent SQL interface for accessing data, as it is developed as…

• MariaDB 10.2.8

• MariaDB Galera Cluster

• MariaDB Galera Cluster 10.0.31

• Connector Java 2.0.2

• MariaDB Galera Cluster 10.0.30

• Connector J 1.5.9

• MariaDB 10.1.21

• MariaDB 10.2.1 Alpha

• MariaDB Enterprise Advanced - $5000/server/year

• MariaDB Enterprise Cluster - $6500/server/year

• MariaDB Enterprise Standard - $2500/server/year

• MariaDB Enterprise Galera Cluster - $6500/node/year

CrateDB

CrateDB is a distributed SQL database built on top of a NoSQL foundation. It combines the familiarity of SQL with the scalability and data flexibility of NoSQL, enabling developers to use SQL to process any type of data, structured or unstructured; to perform SQL queries at real-time speed, even JOINs and aggregates; and to scale simply. CrateDB’s distributed SQL query engine features columnar field caches and a more modern query planner. These give CrateDB the ability to perform aggregations, JOINs, sub-selects, and ad-hoc queries at in-memory speed. CrateDB also integrates native, full-text search features, which enable users to store and…

• Dynamic schemas: Add columns anytime without slowing performance or downtime

• Geospatial queries: Store and query geographical information using the geo_point and geo_shape types

• SQL with integrated search for data and query versatility

• Container architecture and automatic data sharding for simple scaling

• Indexing optimizations enable fast, complex cybersecurity analyses

• Performance-monitoring tools

Contact for Pricing

ClickHouse

ClickHouse is an open-source column-oriented DBMS for online analytical processing. ClickHouse's performance exceeds that of comparable column-oriented DBMSs currently available on the market. It processes hundreds of millions to more than a billion rows and tens of gigabytes of data per single server per second. ClickHouse is simple and works out of the box. As well as running on clusters of hundreds of nodes, this system can be easily installed on a single server or even a virtual machine. ClickHouse uses all available hardware to its full potential to process each query as fast as possible. The peak processing performance for a single query stands at…

• Vector engine: Achieve high CPU performance

• Real-time data updates: There is no locking when adding data

• True column-oriented storage

• Parallel and distributed query execution

• State-of-the-art algorithms

• Pluggable external dimension tables

• Open source

Greenplum Database

Greenplum Database is an advanced, fully featured, open source data platform. It provides powerful and rapid analytics on petabyte scale data volumes. Uniquely geared toward big data analytics, Greenplum Database is powered by the world’s most advanced cost-based query optimizer delivering high analytical query performance on large data volumes. The Greenplum Database architecture provides automatic parallelization of all data and queries in a scale-out, shared nothing architecture. High-performance loading uses MPP technology. Loading speeds scale with each additional node to greater than 10 terabytes per hour, per rack. The query optimizer available in Greenplum Database is the industry’s first cost-based…

• Analytical functions: t-statistics, p-values and naïve Bayes for advanced in-database analytics

• Workload management enables priority adjustment of running queries

• Database performance monitor tool allows system administrators to pinpoint the cause of network issues, and separate hardware issues from software issues

• Polymorphic data storage and execution

• Trickle micro-batching allows data to be loaded at frequent intervals

• Comprehensive SQL-92 and SQL-99 support with SQL 2003 OLAP extensions

• Open source
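The shared-nothing, scale-out idea described above can be illustrated with a toy scatter/gather aggregation: rows are hash-distributed to independent segments, each segment computes a partial result on its own data, and a coordinator merges the partials. This is a conceptual sketch only and does not use or mimic Greenplum's interfaces; the `sales` data, the segment count, and all function names are assumptions, and Python threads stand in for separate nodes purely to show the structure.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor

def distribute(rows, num_segments):
    """Hash each row's key to pick the one segment that owns it."""
    segments = [[] for _ in range(num_segments)]
    for key, amount in rows:
        owner = zlib.crc32(key.encode()) % num_segments
        segments[owner].append((key, amount))
    return segments

def partial_sum(segment):
    """Work done locally on one segment, using only that segment's rows."""
    return sum(amount for _, amount in segment)

def parallel_total(rows, num_segments=4):
    """Scatter rows to segments, aggregate each one, then merge the partial sums."""
    segments = distribute(rows, num_segments)
    with ThreadPoolExecutor(max_workers=num_segments) as pool:
        partials = list(pool.map(partial_sum, segments))
    return sum(partials)  # the coordinator combines the partial results

# Hypothetical sales rows: (region, amount).
sales = [("north", 10), ("south", 25), ("east", 5), ("west", 60)]
total = parallel_total(sales)
print(total)
```

Because no segment needs another segment's rows to compute its partial sum, adding segments adds capacity without adding coordination, which is the property a shared-nothing design relies on.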

Apache HBase

HBase is a non-relational database meant for massively large tables of data that are implicitly distributed across clusters of commodity hardware. HBase provides “linear and modular scalability” and a variety of robust administration and data management features for your tables, all hosted atop Hadoop’s underlying HDFS file system. Designed to support queries of massive data sets, HBase is optimized for read performance. For writes, HBase seeks to maintain consistency. A write operation in HBase first records the data to a commit log (a "write-ahead log"), then to an internal memory structure called a MemStore. When the MemStore fills, it is…

• Automatic and configurable sharding of tables

• Strictly consistent reads and writes

• Query predicate push down via server side Filters

• Extensible jruby-based (JIRB) shell

• Automatic failover support between RegionServers

• Convenient base classes for backing Hadoop MapReduce jobs with Apache HBase tables
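The write order described above, commit log first and in-memory structure second, can be sketched with a toy class. This is not the HBase client API: `TinyStore` and its methods are hypothetical, both structures are plain Python objects, and the step that follows a full MemStore is left out because the description above does not cover it.

```python
class TinyStore:
    """Toy write path: append to a write-ahead log, then update an in-memory map."""

    def __init__(self):
        self.wal = []        # stands in for the on-disk commit log
        self.memstore = {}   # stands in for the in-memory MemStore

    def put(self, row_key, column, value):
        self.wal.append((row_key, column, value))   # 1. log the edit first
        self.memstore[(row_key, column)] = value    # 2. then apply it in memory

    def get(self, row_key, column):
        return self.memstore.get((row_key, column))

    def recover(self):
        """Rebuild the in-memory state by replaying the log in order."""
        self.memstore = {}
        for row_key, column, value in self.wal:
            self.memstore[(row_key, column)] = value

store = TinyStore()
store.put("row1", "cf:name", "Ann")
store.put("row1", "cf:name", "Anna")

store.memstore.clear()   # simulate losing the in-memory state
store.recover()
print(store.get("row1", "cf:name"))
```

Logging before applying is what makes the in-memory copy safe to lose: every acknowledged write can be replayed from the log in its original order.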

Apache Kudu

Kudu is a columnar storage manager developed for the Apache Hadoop platform. Kudu shares the common technical properties of Hadoop ecosystem applications: it runs on commodity hardware, is horizontally scalable, and supports highly available operation. Kudu internally organizes its data by column rather than row. Columnar storage allows efficient encoding and compression. With techniques such as run-length encoding, differential encoding, and vectorized bit-packing, Kudu is as fast at reading the data as it is space-efficient at storing it. Columnar storage also dramatically reduces the amount of data IO required to service analytic queries. Using techniques such as lazy data materialization…

• In-memory columnar execution path

• Advanced in-process tracing capabilities

• Extensive metrics support

• Watchdog threads which check for latency outliers

• Columnar storage allows efficient encoding and compression

• Lazy data materialization and predicate pushdown

• Open source
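Differential encoding, one of the techniques named above, stores each value as its difference from the previous one, which leaves small numbers that pack into few bits. The sketch below is a generic illustration under assumed data (a hypothetical `timestamps` column) and is not Kudu's on-disk format.

```python
def delta_encode(values):
    """Keep the first value, then store each value as the difference from its predecessor."""
    if not values:
        return []
    return [values[0]] + [b - a for a, b in zip(values, values[1:])]

def delta_decode(deltas):
    """Rebuild the original values with a running sum over the deltas."""
    out = []
    total = 0
    for d in deltas:
        total += d
        out.append(total)
    return out

# Hypothetical column of increasing timestamps (seconds).
timestamps = [1700000000, 1700000005, 1700000010, 1700000012]
deltas = delta_encode(timestamps)

# Bits needed for the largest raw value versus the largest delta after the first.
raw_width = max(timestamps).bit_length()
delta_width = max(deltas[1:]).bit_length()
print(deltas, raw_width, delta_width)
```

After the first entry, every stored number fits in a handful of bits instead of the width of a full timestamp, which is what makes a later bit-packing step effective.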

Apache Parquet

Apache Parquet is a columnar storage format available to any project in the Hadoop ecosystem, regardless of the choice of data processing framework, data model or programming language. Parquet is a self-describing data format that embeds the schema or structure within the data itself. This results in a file that is optimized for query performance and minimizing I/O. Parquet also supports very efficient compression and encoding schemes. Apache Parquet is designed to bring efficient columnar storage of data compared to row-based files like CSV. Multiple projects have demonstrated the performance impact of applying the right compression and encoding scheme to…

• Automatic dictionary encoding enabled dynamically for data with a small number of unique values

• Run-length encoding (RLE)

• Per-column data compression accelerates performance

• Queries that fetch specific column values need not read the entire row data thus improving performance

• Dictionaries/hash tables, indexes, bit vectors

• Repetition levels

Contact for Pricing
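Dictionary encoding, listed among the features above, replaces each distinct value in a column with a small integer code. The following is a minimal sketch of the idea, not the Parquet file format or any Parquet library API; the `country` column and the function names are assumptions made for illustration.

```python
def dictionary_encode(column):
    """Replace each value with an integer code; return (dictionary, codes)."""
    dictionary = []   # code -> value, in order of first appearance
    index = {}        # value -> code
    codes = []
    for value in column:
        if value not in index:
            index[value] = len(dictionary)
            dictionary.append(value)
        codes.append(index[value])
    return dictionary, codes

def dictionary_decode(dictionary, codes):
    """Look each code up in the dictionary to recover the original column."""
    return [dictionary[code] for code in codes]

# Hypothetical column with only a few unique values.
country = ["NO", "SE", "NO", "NO", "DK", "SE"]
dictionary, codes = dictionary_encode(country)
print(dictionary, codes)
```

The scheme pays off when a column has few unique values, since each string is stored once and the column itself becomes a list of small integers that can be compressed further.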

Hypertable

Hypertable is a high performance, open source, massively scalable database modeled after Bigtable, Google's proprietary, massively scalable database. Hypertable was designed for the express purpose of solving the scalability problem, a problem that is not handled well by a traditional RDBMS. Hypertable delivers maximum efficiency and superior performance over the competition which translates into major cost savings. Tables in Hypertable can be thought of as massive tables of data, sorted by a single primary key, the row key. Like a relational database, Hypertable represents data as tables of information. Each row in a table has cells containing related information, and…

• Database integrity check/repair tool

• High Performance: C++ implementation for optimum performance

• Comprehensive Language Support: Java, Node.js, PHP, Python, Perl, Ruby

• Bypassing commit log (WAL) writes provides a way to significantly increase bulk loading performance

• Cluster administration tool

• CellStore salvage tool

• Open source
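A table sorted by a single row key, as described above, makes range scans cheap: the start of the range is found by binary search and the rows are then read in order. The sketch below shows that idea with Python's `bisect` module. `SortedTable` is a hypothetical in-memory class for illustration and has nothing to do with Hypertable's actual client interfaces.

```python
import bisect

class SortedTable:
    """Toy table whose rows are kept in row-key order."""

    def __init__(self):
        self.keys = []   # row keys, always sorted
        self.rows = {}   # row key -> {column: value}

    def put(self, row_key, column, value):
        if row_key not in self.rows:
            bisect.insort(self.keys, row_key)   # keep the key list sorted
            self.rows[row_key] = {}
        self.rows[row_key][column] = value

    def scan(self, start_key, end_key):
        """Yield (row_key, cells) for start_key <= row_key < end_key, in key order."""
        lo = bisect.bisect_left(self.keys, start_key)
        hi = bisect.bisect_left(self.keys, end_key)
        for key in self.keys[lo:hi]:
            yield key, self.rows[key]

table = SortedTable()
for key in ["b1", "a2", "a1"]:          # inserted out of order on purpose
    table.put(key, "title", key.upper())

scanned = [key for key, _ in table.scan("a", "b")]
print(scanned)
```

Because related keys sort next to each other, choosing a row key with a meaningful prefix turns "all rows for this prefix" into one contiguous scan.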

MonetDB

MonetDB is a full-fledged relational DBMS that supports SQL:2003 and provides standard client interfaces such as ODBC and JDBC. It is designed to exploit the large main memories of modern computers during query processing. A rich collection of linked-in libraries provides functionality for temporal data types, math routines, strings, and URLs. MonetDB is built on the canonical representation of database relations as columns, a.k.a. arrays. They are sizeable entities (up to hundreds of megabytes) swapped into memory by the operating system and compressed on disk upon need. MonetDB excels in applications where the database hot-set - the part actually touched…

• Enterprise level features such as clustering, data partitioning, and distributed query processing

• Management of External Data (SQL/MED)

• Information and Definition Schemas

• Routines and Types using the Java Language

• Database cracking is a technique that shifts the cost of index maintenance from updates to query processing

• Vertical fragmentation ensures that the non-needed attributes are not in the way

• Open source
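Database cracking, mentioned in the list above, can be pictured as letting each range query partially sort the column it touches. The sketch below is a heavily simplified illustration, not MonetDB's implementation: `CrackedColumn`, its fields, and the sample values are assumptions, and the partitioning step builds new lists instead of swapping values in place.

```python
import bisect

class CrackedColumn:
    """Toy cracker column: each query partitions only the piece it needs."""

    def __init__(self, values):
        self.values = list(values)   # copy of the column that gets reorganized
        self.pivots = []             # sorted pivot values seen so far
        self.bounds = []             # bounds[i]: first index holding a value >= pivots[i]

    def crack(self, pivot):
        """Ensure values < pivot precede values >= pivot; return the split index."""
        i = bisect.bisect_left(self.pivots, pivot)
        if i < len(self.pivots) and self.pivots[i] == pivot:
            return self.bounds[i]                      # already cracked here
        lo = self.bounds[i - 1] if i > 0 else 0
        hi = self.bounds[i] if i < len(self.bounds) else len(self.values)
        piece = self.values[lo:hi]                     # only this piece is touched
        smaller = [v for v in piece if v < pivot]
        larger = [v for v in piece if v >= pivot]
        self.values[lo:hi] = smaller + larger
        split = lo + len(smaller)
        self.pivots.insert(i, pivot)
        self.bounds.insert(i, split)
        return split

    def range_query(self, low, high):
        """Return the values v with low <= v < high (in no particular order)."""
        start = self.crack(low)
        end = self.crack(high)
        return self.values[start:end]

col = CrackedColumn([13, 4, 55, 9, 21, 2, 34, 7])
result = col.range_query(5, 22)
print(sorted(result), col.pivots, col.bounds)
```

No index is built up front and nothing is maintained on update; the column becomes more ordered only where queries actually look, and a repeated query on the same bounds finds its answer without moving any data.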

